Apache Kafka - Spring Kafka Producing and Consuming: @KafkaListener Source Code Analysis

Overview

[Dependency]

    <dependency>
        <groupId>org.springframework.kafka</groupId>
        <artifactId>spring-kafka</artifactId>
    </dependency>

[Configuration]

    # kafka
    spring.kafka.bootstrap-servers=10.11.114.247:9092
    spring.kafka.producer.acks=1
    spring.kafka.producer.retries=3
    spring.kafka.producer.batch-size=16384
    spring.kafka.producer.buffer-memory=33554432
    spring.kafka.producer.key-serializer=org.apache.kafka.common.serialization.StringSerializer
    spring.kafka.producer.value-serializer=org.springframework.kafka.support.serializer.JsonSerializer
    spring.kafka.consumer.group-id=zfprocessor_group
    spring.kafka.consumer.enable-auto-commit=false
    spring.kafka.consumer.auto-offset-reset=earliest
    spring.kafka.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer
    spring.kafka.consumer.value-deserializer=org.springframework.kafka.support.serializer.JsonDeserializer
    spring.kafka.consumer.properties.spring.json.trusted.packages=com.artisan.common.entity.messages
    spring.kafka.consumer.max-poll-records=500
    spring.kafka.consumer.fetch-min-size=10
    spring.kafka.consumer.fetch-max-wait=10000ms
    spring.kafka.listener.missing-topics-fatal=false
    spring.kafka.listener.type=batch
    spring.kafka.listener.ack-mode=manual
    logging.level.org.springframework.kafka=ERROR
    logging.level.org.apache.kafka=ERROR

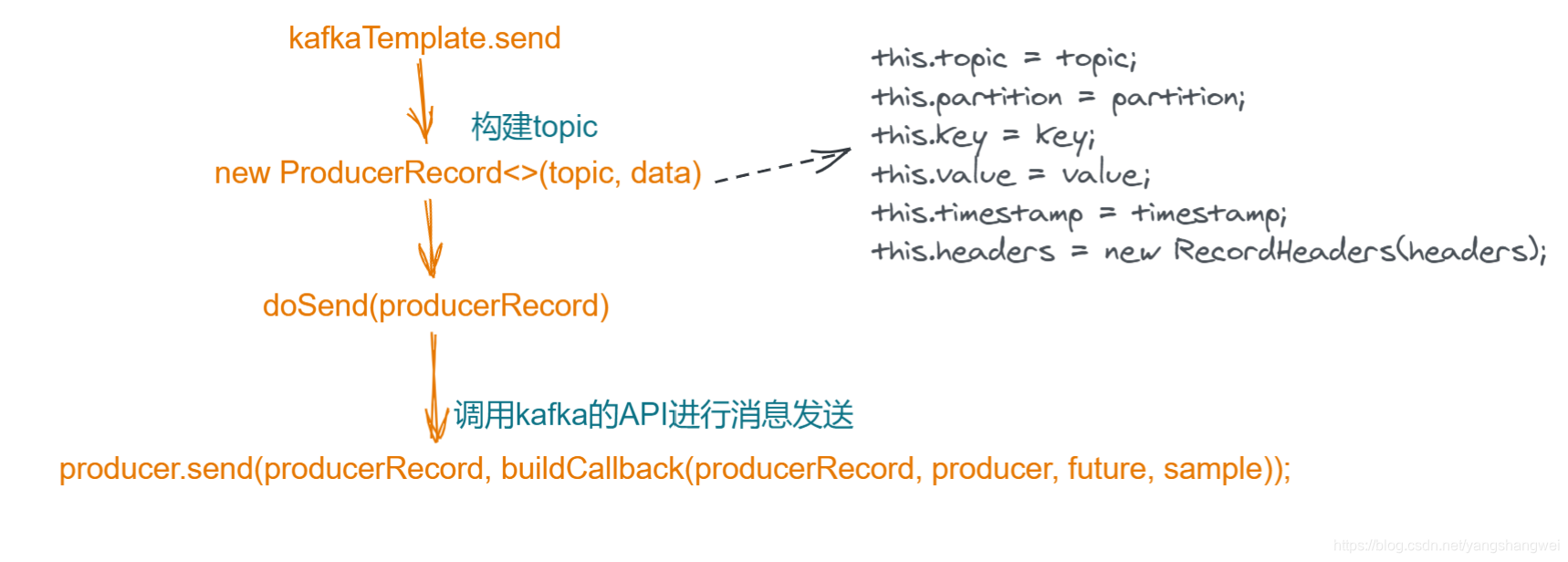

Spring Kafka producer source-code flow

    ListenableFuture<SendResult<Object, Object>> result = kafkaTemplate.send(TOPICA.TOPIC, messageMock);

The main source-code flow is as follows:

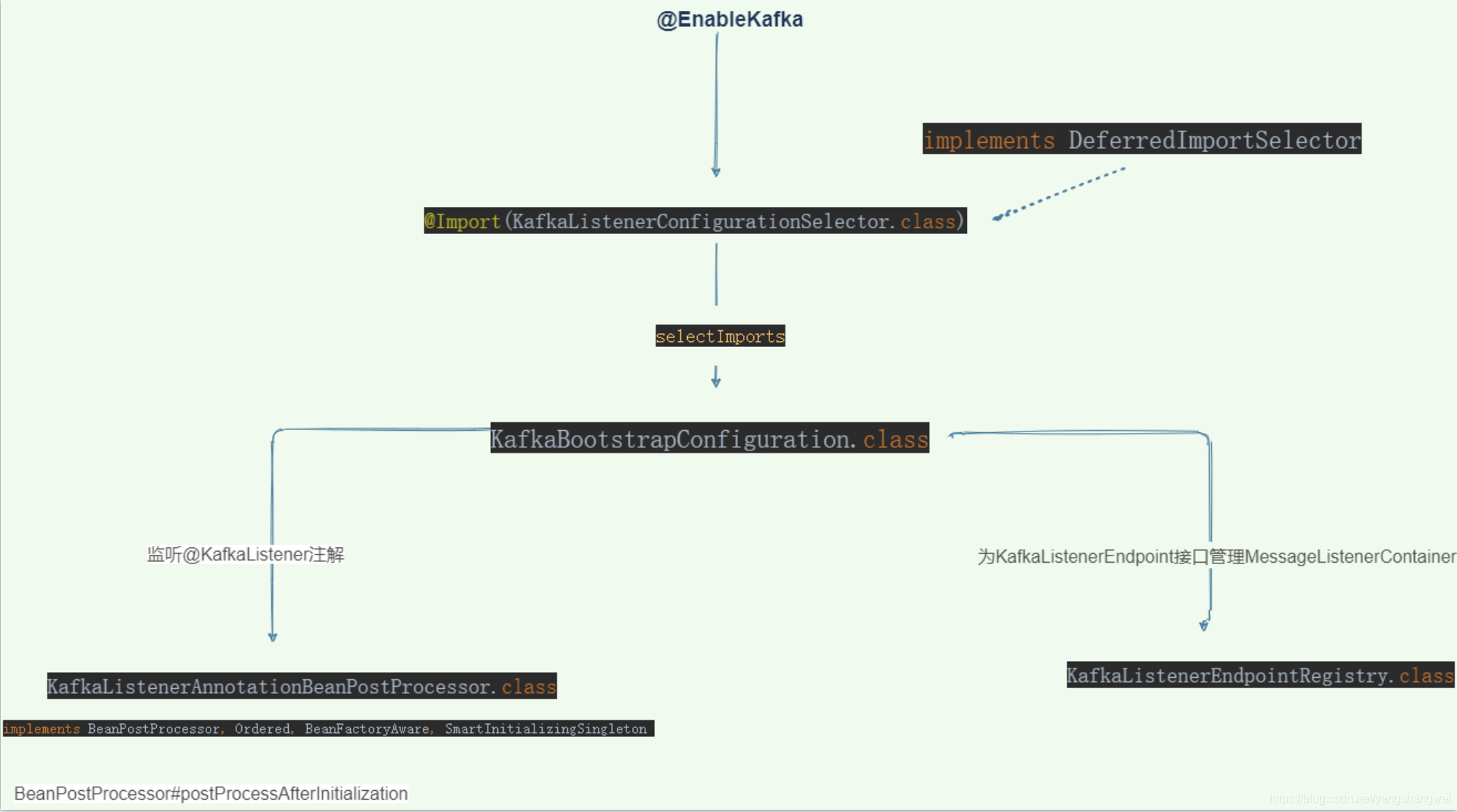

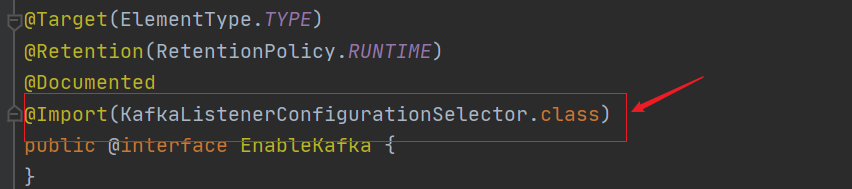

Spring Kafka consumer source-code flow (@EnableKafka and @KafkaListener)

The consumer side is more involved.

    @KafkaListener(topics = TOPICA.TOPIC, groupId = CONSUMER_GROUP_PREFIX + TOPICA.TOPIC)
    public void onMessage(MessageMock messageMock) {
        logger.info("[Message received][thread: {} payload: {}]", Thread.currentThread().getName(), messageMock);
    }

Key points to focus on:

Flow

With that as a lead-in, let's continue working through the source code.

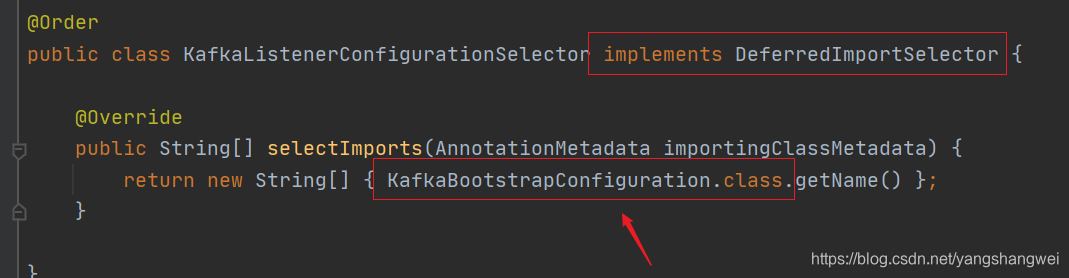

Continuing:

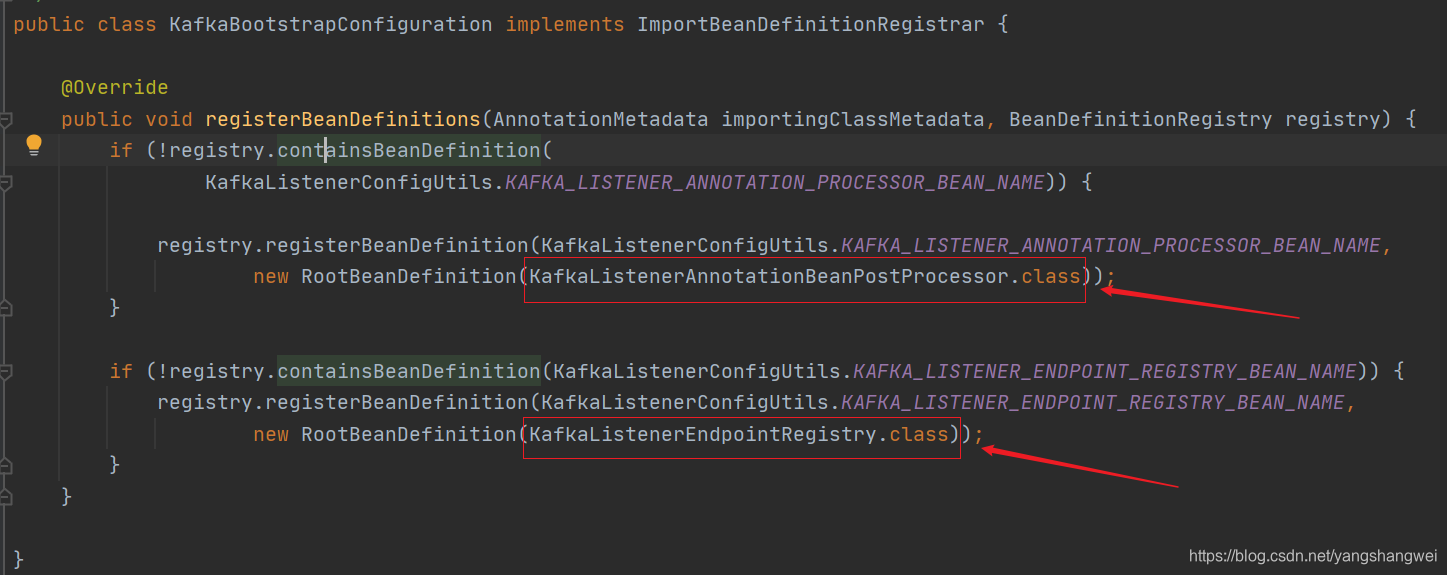

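The main job of KafkaBootstrapConfiguration is to register two beans: KafkaListenerAnnotationBeanPostProcessor and KafkaListenerEndpointRegistry.

KafkaListenerAnnotationBeanPostProcessor

It implements the following interfaces:

    implements BeanPostProcessor, Ordered, BeanFactoryAware, SmartInitializingSingleton

Its main job is to detect @KafkaListener annotations. As a bean post-processor, it overrides postProcessAfterInitialization: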

    @Override
    public Object postProcessAfterInitialization(final Object bean, final String beanName) throws BeansException {
        if (!this.nonAnnotatedClasses.contains(bean.getClass())) {
            // Resolve the target class (unwrapping any AOP proxy)
            Class<?> targetClass = AopUtils.getTargetClass(bean);
            // Look for class-level @KafkaListener annotations
            Collection<KafkaListener> classLevelListeners = findListenerAnnotations(targetClass);
            final boolean hasClassLevelListeners = classLevelListeners.size() > 0;
            final List<Method> multiMethods = new ArrayList<>();
            // Look for @KafkaListener annotations on the methods of the class
            Map<Method, Set<KafkaListener>> annotatedMethods = MethodIntrospector.selectMethods(targetClass,
                    (MethodIntrospector.MetadataLookup<Set<KafkaListener>>) method -> {
                        Set<KafkaListener> listenerMethods = findListenerAnnotations(method);
                        return (!listenerMethods.isEmpty() ? listenerMethods : null);
                    });
            if (hasClassLevelListeners) {
                Set<Method> methodsWithHandler = MethodIntrospector.selectMethods(targetClass,
                        (ReflectionUtils.MethodFilter) method ->
                                AnnotationUtils.findAnnotation(method, KafkaHandler.class) != null);
                multiMethods.addAll(methodsWithHandler);
            }
            if (annotatedMethods.isEmpty()) {
                this.nonAnnotatedClasses.add(bean.getClass());
                this.logger.trace(() -> "No @KafkaListener annotations found on bean type: " + bean.getClass());
            }
            else {
                // Non-empty set of methods
                for (Map.Entry<Method, Set<KafkaListener>> entry : annotatedMethods.entrySet()) {
                    Method method = entry.getKey();
                    for (KafkaListener listener : entry.getValue()) {
                        // Process the @KafkaListener annotation (the key step)
                        processKafkaListener(listener, method, bean, beanName);
                    }
                }
                this.logger.debug(() -> annotatedMethods.size() + " @KafkaListener methods processed on bean '"
                        + beanName + "': " + annotatedMethods);
            }
            if (hasClassLevelListeners) {
                processMultiMethodListeners(classLevelListeners, multiMethods, bean, beanName);
            }
        }
        return bean;
    }
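To make the scanning step above more concrete, here is a minimal, dependency-free sketch of the same idea using plain reflection. Everything in it is an assumption made for illustration: `DemoListener`, `DemoListeners`, `OrderHandlers`, and `ListenerScanner` are hypothetical names, not Spring Kafka types. The real post-processor relies on Spring's MethodIntrospector and AnnotationUtils, which also deal with proxies, meta-annotations, and inherited methods; this sketch does not.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Repeatable;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical stand-in for @KafkaListener; not part of Spring Kafka.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@Repeatable(DemoListeners.class)
@interface DemoListener {
    String topic();
}

// Container annotation required by @Repeatable.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface DemoListeners {
    DemoListener[] value();
}

// Hypothetical bean: two annotated methods and one plain helper.
class OrderHandlers {
    @DemoListener(topic = "orders")
    public void onOrder(String payload) { }

    @DemoListener(topic = "audit")
    @DemoListener(topic = "audit-retry")
    public void onAudit(String payload) { }

    public void helper() { }
}

class ListenerScanner {

    // Maps each annotated method name to the topics declared on it.
    // Methods without the annotation are skipped, similar in spirit to the
    // MetadataLookup above returning null for them.
    static Map<String, List<String>> scan(Class<?> targetClass) {
        Map<String, List<String>> result = new TreeMap<>();
        for (Method method : targetClass.getDeclaredMethods()) {
            DemoListener[] found = method.getAnnotationsByType(DemoListener.class);
            if (found.length > 0) {
                result.put(method.getName(),
                        Arrays.stream(found).map(DemoListener::topic).collect(Collectors.toList()));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(scan(OrderHandlers.class));
    }
}
```

As in the real code, one method can carry several listener annotations, which is why the result maps a method to a collection rather than to a single annotation.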

The key method:

    protected void processKafkaListener(KafkaListener kafkaListener, Method method, Object bean, String beanName) {
        Method methodToUse = checkProxy(method, bean);
        MethodKafkaListenerEndpoint<K, V> endpoint = new MethodKafkaListenerEndpoint<>();
        endpoint.setMethod(methodToUse);
        processListener(endpoint, kafkaListener, bean, methodToUse, beanName);
    }

Next, processListener:

    protected void processListener(MethodKafkaListenerEndpoint<?, ?> endpoint, KafkaListener kafkaListener,
            Object bean, Object adminTarget, String beanName) {
        String beanRef = kafkaListener.beanRef();
        if (StringUtils.hasText(beanRef)) {
            this.listenerScope.addListener(beanRef, bean);
        }
        // Build the endpoint
        endpoint.setBean(bean);
        endpoint.setMessageHandlerMethodFactory(this.messageHandlerMethodFactory);
        endpoint.setId(getEndpointId(kafkaListener));
        endpoint.setGroupId(getEndpointGroupId(kafkaListener, endpoint.getId()));
        endpoint.setTopicPartitions(resolveTopicPartitions(kafkaListener));
        endpoint.setTopics(resolveTopics(kafkaListener));
        endpoint.setTopicPattern(resolvePattern(kafkaListener));
        endpoint.setClientIdPrefix(resolveExpressionAsString(kafkaListener.clientIdPrefix(), "clientIdPrefix"));
        String group = kafkaListener.containerGroup();
        if (StringUtils.hasText(group)) {
            Object resolvedGroup = resolveExpression(group);
            if (resolvedGroup instanceof String) {
                endpoint.setGroup((String) resolvedGroup);
            }
        }
        String concurrency = kafkaListener.concurrency();
        if (StringUtils.hasText(concurrency)) {
            endpoint.setConcurrency(resolveExpressionAsInteger(concurrency, "concurrency"));
        }
        String autoStartup = kafkaListener.autoStartup();
        if (StringUtils.hasText(autoStartup)) {
            endpoint.setAutoStartup(resolveExpressionAsBoolean(autoStartup, "autoStartup"));
        }
        resolveKafkaProperties(endpoint, kafkaListener.properties());
        endpoint.setSplitIterables(kafkaListener.splitIterables());
        KafkaListenerContainerFactory<?> factory = null;
        String containerFactoryBeanName = resolve(kafkaListener.containerFactory());
        if (StringUtils.hasText(containerFactoryBeanName)) {
            Assert.state(this.beanFactory != null, "BeanFactory must be set to obtain container factory by bean name");
            try {
                factory = this.beanFactory.getBean(containerFactoryBeanName, KafkaListenerContainerFactory.class);
            }
            catch (NoSuchBeanDefinitionException ex) {
                throw new BeanInitializationException("Could not register Kafka listener endpoint on [" + adminTarget
                        + "] for bean " + beanName + ", no " + KafkaListenerContainerFactory.class.getSimpleName()
                        + " with id '" + containerFactoryBeanName + "' was found in the application context", ex);
            }
        }
        endpoint.setBeanFactory(this.beanFactory);
        String errorHandlerBeanName = resolveExpressionAsString(kafkaListener.errorHandler(), "errorHandler");
        if (StringUtils.hasText(errorHandlerBeanName)) {
            endpoint.setErrorHandler(this.beanFactory.getBean(errorHandlerBeanName, KafkaListenerErrorHandler.class));
        }
        // Register the endpoint with the registrar
        this.registrar.registerEndpoint(endpoint, factory);
        if (StringUtils.hasText(beanRef)) {
            this.listenerScope.removeListener(beanRef);
        }
    }

继续看 registerEndpoint

public void registerEndpoint(KafkaListenerEndpoint endpoint, KafkaListenerContainerFactory<?> factory) {

Assert.notNull(endpoint, "Endpoint must be set");

Assert.hasText(endpoint.getId(), "Endpoint id must be set");

// Factory may be null, we defer the resolution right before actually creating the container

// 把endpoint封装为KafkaListenerEndpointDescriptor

KafkaListenerEndpointDescriptor descriptor = new KafkaListenerEndpointDescriptor(endpoint, factory);

synchronized (this.endpointDescriptors) {

if (this.startImmediately) {

// Register and start immediately

this.endpointRegistry.registerListenerContainer(descriptor.endpoint,

resolveContainerFactory(descriptor), true);

}

else {

// 将descriptor添加到endpointDescriptors

this.endpointDescriptors.add(descriptor);

}

}

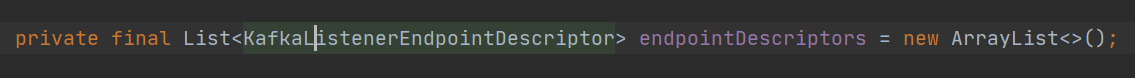

}

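To sum up: we obtain an Endpoint carrying the basic @KafkaListener information, the Endpoint is wrapped in a KafkaListenerEndpointDescriptor, and the descriptor is added to KafkaListenerEndpointRegistrar.endpointDescriptors. This part of the flow ends here, seemingly without a follow-up.

So how is the data in the KafkaListenerEndpointRegistrar.endpointDescriptors list actually used?

    public class KafkaListenerEndpointRegistrar implements BeanFactoryAware, InitializingBean {
    }

KafkaListenerEndpointRegistrar implements InitializingBean and overrides afterPropertiesSet. The registrar is not itself a Spring-managed bean: KafkaListenerAnnotationBeanPostProcessor creates it directly and calls afterPropertiesSet explicitly from afterSingletonsInstantiated(), that is, once all singleton beans have been instantiated.

    @Override
    public void afterPropertiesSet() {
        registerAllEndpoints();
    }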

Next, registerAllEndpoints():

    protected void registerAllEndpoints() {
        synchronized (this.endpointDescriptors) {
            // Iterate over the KafkaListenerEndpointDescriptors
            for (KafkaListenerEndpointDescriptor descriptor : this.endpointDescriptors) {
                // Register each one with the endpoint registry
                this.endpointRegistry.registerListenerContainer(
                        descriptor.endpoint, resolveContainerFactory(descriptor));
            }
            this.startImmediately = true;  // trigger immediate startup
        }
    }
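The two-phase behaviour of registerEndpoint and registerAllEndpoints (queue first, flush once, then register directly) can be sketched without Spring. `DemoRegistrar` is a hypothetical, heavily simplified model written for this article: it tracks endpoint ids as plain strings and keeps a list where the real registrar delegates to KafkaListenerEndpointRegistry.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the registrar's deferred registration; not Spring Kafka code.
class DemoRegistrar {

    private final List<String> endpointDescriptors = new ArrayList<>();   // queued during bean creation
    private final List<String> registeredEndpoints = new ArrayList<>();   // stands in for the registry
    private boolean startImmediately;

    // Phase 1: called once per discovered listener while beans are still being processed.
    void registerEndpoint(String endpointId) {
        synchronized (this.endpointDescriptors) {
            if (this.startImmediately) {
                // Registered after the flush: goes straight to the registry.
                this.registeredEndpoints.add(endpointId);
            }
            else {
                this.endpointDescriptors.add(endpointId);
            }
        }
    }

    // Phase 2: called once; flushes the queue and switches to immediate mode.
    void afterPropertiesSet() {
        synchronized (this.endpointDescriptors) {
            this.registeredEndpoints.addAll(this.endpointDescriptors);
            this.startImmediately = true;
        }
    }

    // Returns a snapshot of what has reached the registry so far.
    List<String> registered() {
        synchronized (this.endpointDescriptors) {
            return new ArrayList<>(this.registeredEndpoints);
        }
    }

    public static void main(String[] args) {
        DemoRegistrar registrar = new DemoRegistrar();
        registrar.registerEndpoint("a");
        registrar.registerEndpoint("b");
        System.out.println("before flush: " + registrar.registered());
        registrar.afterPropertiesSet();
        registrar.registerEndpoint("c");
        System.out.println("after flush:  " + registrar.registered());
    }
}
```

The point of the flag is that listeners discovered during startup wait until every singleton exists, while any endpoint registered later is handed to the registry right away.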

Continuing with registerListenerContainer:

    public void registerListenerContainer(KafkaListenerEndpoint endpoint, KafkaListenerContainerFactory<?> factory) {
        registerListenerContainer(endpoint, factory, false);
    }

This delegates to the three-argument overload:

    public void registerListenerContainer(KafkaListenerEndpoint endpoint, KafkaListenerContainerFactory<?> factory,
            boolean startImmediately) {
        Assert.notNull(endpoint, "Endpoint must not be null");
        Assert.notNull(factory, "Factory must not be null");
        String id = endpoint.getId();
        Assert.hasText(id, "Endpoint id must not be empty");
        synchronized (this.listenerContainers) {
            Assert.state(!this.listenerContainers.containsKey(id),
                    "Another endpoint is already registered with id '" + id + "'");
            // Create the MessageListenerContainer for the endpoint and store it in listenerContainers
            MessageListenerContainer container = createListenerContainer(endpoint, factory);
            this.listenerContainers.put(id, container);
            // If the @KafkaListener annotation specifies a container group, add the container to that group
            if (StringUtils.hasText(endpoint.getGroup()) && this.applicationContext != null) {
                List<MessageListenerContainer> containerGroup;
                if (this.applicationContext.containsBean(endpoint.getGroup())) {
                    containerGroup = this.applicationContext.getBean(endpoint.getGroup(), List.class);
                }
                else {
                    containerGroup = new ArrayList<MessageListenerContainer>();
                    this.applicationContext.getBeanFactory().registerSingleton(endpoint.getGroup(), containerGroup);
                }
                containerGroup.add(container);
            }
            if (startImmediately) {
                startIfNecessary(container);
            }
        }
    }
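The bookkeeping in registerListenerContainer boils down to three rules: ids must be unique, containers that share a group name end up in one shared list, and a container is started on the spot only when startImmediately is set. The sketch below models just those rules. `DemoRegistry` and `DemoContainer` are hypothetical names, and a plain map stands in for the List beans that the real code registers in the application context.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for MessageListenerContainer.
class DemoContainer {
    final String id;
    boolean running;

    DemoContainer(String id) {
        this.id = id;
    }
}

// Hypothetical model of the registry's bookkeeping; not Spring Kafka code.
class DemoRegistry {

    private final Map<String, DemoContainer> listenerContainers = new LinkedHashMap<>();
    // Assumption: a map replaces the per-group List beans held by the application context.
    private final Map<String, List<DemoContainer>> containerGroups = new LinkedHashMap<>();

    DemoContainer register(String id, String group, boolean startImmediately) {
        synchronized (this.listenerContainers) {
            // Rule 1: endpoint ids must be unique.
            if (this.listenerContainers.containsKey(id)) {
                throw new IllegalStateException("Another endpoint is already registered with id '" + id + "'");
            }
            DemoContainer container = new DemoContainer(id);
            this.listenerContainers.put(id, container);
            // Rule 2: reuse the group's list if it exists, otherwise create it.
            if (group != null && !group.isEmpty()) {
                this.containerGroups.computeIfAbsent(group, name -> new ArrayList<>()).add(container);
            }
            // Rule 3: start right away only when asked to.
            if (startImmediately) {
                container.running = true;
            }
            return container;
        }
    }

    // Returns a copy of the group's members, or an empty list for an unknown group.
    List<DemoContainer> group(String name) {
        synchronized (this.listenerContainers) {
            return new ArrayList<>(this.containerGroups.getOrDefault(name, new ArrayList<>()));
        }
    }

    public static void main(String[] args) {
        DemoRegistry registry = new DemoRegistry();
        registry.register("listener-1", "billing", false);
        registry.register("listener-2", "billing", true);
        System.out.println("billing group size: " + registry.group("billing").size());
    }
}
```

Registering a second endpoint with an id that is already taken throws, which mirrors the Assert.state check in the method above.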
