2016:DianNao Family Energy-Efficient Hardware Accelerators for Machine Learning-程序员宅基地

技术标签: pr

文章目录

- 这个发表在了

- https://cacm.acm.org/

- communication of the ACM

- 上

- 参考文献链接是

Chen Y , Chen T , Xu Z , et al.

DianNao Family: Energy-Efficient Hardware Accelerators for Machine Learning[J].

Communications of the Acm, 2016, 59(11):105-112

- https://cacm.acm.org/magazines/2016/11/209123-diannao-family/fulltext

- 果然找到了他

- 特码的,我下载了,6不6

The original version of this paper is entitled “DianNao: A

Small-Footprint, High-Throughput Accelerator for Ubiq-

uitous Machine Learning” and was published in Proceed-

ings of the International Conference on Architectural Support

for Programming Languages and Operating Systems (ASPLOS)

49, 4 (March 2014), ACM, New York, NY, 269–284.

Abstract

- ML pervasive

- broad range of applications

- broad range of systems(embedded to data centers)

- broad range of applications

- computer

- toward heterogeneous multi-cores

- a mix of cores and hardware accelerators,

- designing hardware accelerators for ML

- achieve high efficiency and broad application scope

第二段

- efficient computational primitives

- important for a hardware accelerator,

- inefficient memory transfers can

- potentially void the throughput, energy, or cost advantages of accelerators,

- an Amdahl’s law effect

- become a first-order concern,

- like in processors,

- rather than an element factored in accelerator design on a second step

- a series of hardware accelerators

- designed for ML(nn),

- the impact of memory on accelerator design, performance, and energy.

- representative neural network layers

- 450.65x over GPU

- energy by 150.31x on average

- for 64-chip DaDianNao (a member of the DianNao family)

1 INTRODUCTION

- designing hardware accelerators which realize the best possible tradeoff between flexibility and efficiency is becoming a prominent

issue.

- The first question is for which category of applications one should primarily design accelerators?

- Together with the architecture trend towards accelerators, a second simultaneous and significant trend in high-performance and embedded applications is developing: many of the emerging high-performance and embedded applications, from image/video/audio recognition to automatic translation, business analytics, and robotics rely on machine learning

techniques. - This trend in application comes together with a third trend in machine learning (ML) where a small number

of techniques, based on neural networks (especially deep learning techniques 16, 26 ), have been proved in the past few

years to be state-of-the-art across a broad range of applications. - As a result, there is a unique opportunity to design accelerators having significant application scope as well as

high performance and efficiency. 4

第二段

- Currently, ML workloads

- mostly executed on

- multicores using SIMD[44]

- on GPUs[7]

- or on FPGAs[2]

- the aforementioned trends

- have already been identified

- by researchers who have proposed accelerators implementing,

- CNNs[2]

- Multi-Layer Perceptrons [43] ;

- accelerators focusing on other domains,

- image processing,

- propose efficient implementations of some of the computational primitives used

- by machine-learning techniques, such as convolutions[37]

- There are also ASIC implementations of ML

- such as Support Vector Machine and CNNs.

- these works focused on

- efficiently implementing the computational primitives

- ignore memory transfers for the sake of simplicity[37,43]

- plug their computational accelerator to memory via a more or less sophisticated DMA. [2,12,19]

- efficiently implementing the computational primitives

第三段

-

While efficient implementation of computational primitives is a first and important step with promising results,

inefficient memory transfers can potentially void the throughput, energy, or cost advantages of accelerators, that is, an

Amdahl’s law effect, and thus, they should become a first-

order concern, just like in processors, rather than an element

factored in accelerator design on a second step. -

Unlike in processors though, one can factor in the specific nature of

memory transfers in target algorithms, just like it is done for accelerating computations. -

This is especially important in the domain of ML where there is a clear trend towards scaling up the size of learning models in order to achieve better accuracy and more functionality. 16, 24

第四段

- In this article, we introduce a series of hardware accelerators designed for ML (especially neural networks), including

DianNao, DaDianNao, ShiDianNao, and PuDianNao as listed in Table 1. - We focus our study on memory usage, and we investigate the accelerator architecture to minimize memory

transfers and to perform them as efficiently as possible.

2 DIANNAO: A NN ACCELERATOR

- DianNao

- first of DianNao accelerator family,

- accommodates sota nn techniques (dl ),

- inherits the broad application scope of nn.

2.1 Architecture

- DianNao

- input buffer for input (NBin)

- output buffer for output (NBout)

- buffer for synaptic(突触) weights (SB)

- connected to a computational block (performing both synapses and neurons computations)

- NFU, and CP, see Figure 1

NBin是存放输入神经元

SB是存放突触的权重的

这个NBout是存放输出神经元

我觉得图示的可以这样理解:2个输入神经元,2个突触,将这2个对应乘起来,输出是1个神经元啊。但是我的这个NFU牛逼啊,他可以一次性求两个输出神经元。

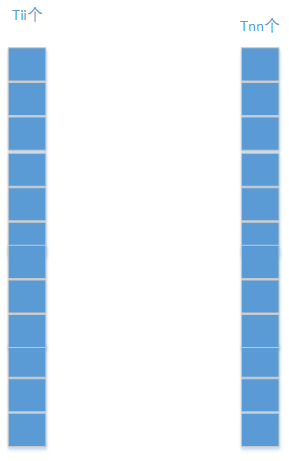

NFU

- a functional block of T i T_i Ti inputs/synapses(突触)

- T n T_n Tn output neurons,

- time-shared by different algorithmic blocks of neurons.

这个NFU对 T i T_i Ti个输入和突触运算,得到 T n T_n Tn个输出神经元,突触不是应该是 T i × T n T_i\times T_n Ti×Tn个吗??,

- Depending on the layer type,

- computations at the NFU can be decomposed in either two or three stages

- For classifier and convolutional:

- multiplication of synapses × \times × inputs:NFU-1

- , additions of all multiplications, :NFU-2

- sigmoid. :NFU-3

如果是分类层或者卷积的话的话,那就是简单的突触 × \times × 输入,然后加起来,求sigmoid。这个我能理解哦,这种情况不就是卷积吗。

如果是分类层,那么输入就是

- last stage (sigmoid or another nonlinear function) can vary.

- For pooling, no multiplication(no synapse),

- pooling can be average or max.

- adders(加法器) have multiple inputs,

- they are in fact adder trees,

- the second stage also contains

- shifters and max operators for pooling.

要啥移位啊??

- the sigmoid function (for classifier and convolutional layers)can be efficiently implemented using ( f ( x ) = a i x × + b i , x ∈ [ x i , x i + 1 ] f(x) = a_i x \times + b_i , x \in [x_i , x_{i+1} ] f(x)=aix×+bi,x∈[xi,xi+1]) (16 segments are sufficient)

On-chip Storage

- on-chip storage structures of DianNao

- can be construed as modified buffers of scratchpads.

- While a cache is an excellent storage structure for a general-purpose processor, it is a sub-optimal way to exploit reuse because of the cache access overhead (tag check, associativity, line size, speculative read, etc.) and cache conflicts.

- The efficient alternative, scratchpad, is used in VLIW processors but it is known to be very difficult to compile for.

- However a scratchpad in a dedicated accelerator realizes the best of both worlds: efficient

storage, and both efficient and easy exploitation of locality because only a few algorithms have to be manually adapted.

第二段

- on-chip storage into three (NBin, NBout,and SB), because there are three type of data (input neurons,output neurons and synapses) with different characteristics (read width and reuse distance).

- The first benefit of splitting structures is to tailor the SRAMs to the appropriate

read/write width, - and the second benefit of splitting storage structures is to avoid conflicts, as would occur in a cache.

- Moreover, we implement three DMAs to exploit spatial locality of data, one for each buffer (two load DMAs for inputs, one store DMA for outputs).

2.2 Loop tiling

- DianNao 用 loop tiling去减少memory access

- so可容纳大的神经网络

- 举例

- 一个classifier 层

- 有 N n N_n Nn输出神经元

- 全连接到 N i N_i Ni的输入

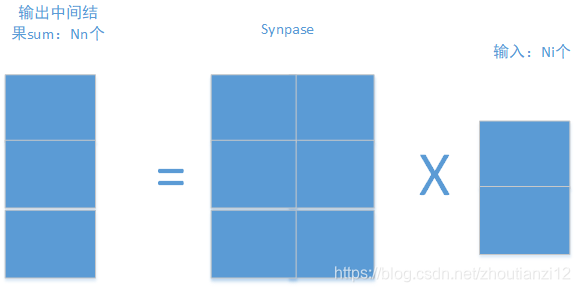

- 如下图

- 一个classifier 层

N n N_n Nn个输出, N i N_i Ni个输入,sypase应该是 N n × N i N_n\times N_i Nn×Ni大小,用这个矩阵 × N i \times N_i ×Ni即可得到结果啊

- 先取出来一块

- 有点疑惑啊

- 万一右边第一个元素和左边全部元素都有关

- 你咋算啊 ()

- 其实啊,我他妈算右边第一个时候

- 只需要用到和synapse的一行呀!

- 那你那个大大的synapse矩阵咋办啊

- 下面是原始代码和和

- tiled代码

- 他把分类层映射到DianNao

for(int n=0;n<Nn;n++)

sum[n]=0;

for(int n=0;n<Nn;n++) //输出神经元

for(int i=0;i<Ni;i++) //输入神经元

sum[n]+=synapse[n][i]*neuron[i];

for(int n=0;n<Nn;n++)

neuron[n]=Sigmoid(sum[n]);

- 俺的想法:

- 一次来Tnn个输出

- 和Tii个输入

- 然后这个东西对于硬件还是太大了

- 再拆

- 来Tn个和Ti个吧

- 就酱

for(int nnn=0;nnn<Nn;nnn+=Tnn){

//tiling for output 神经元

//第一个for循环准备扔出去Tnn个输出

for(int iii=0;iii<Ni;iii+=Tii){

//tiling for input 神经元

//第二个for循环准备扔进来Tii个输入

//下面就这两个东西动手

for(int nn=nnn;nn<nnn+Tnn;nn+=Tn){

//第三个for循环觉得觉得Tnn还是太大了,继续拆

//大小是Tn

//那么我们对每一个Tnn块!(开始位置是nn哦!!)

//我们如下求解

///

for(int n=nn;n<nn+Tn;n++)

//第一步把中间结果全部搞成零!

sum[n]=0;

//为求sum[n],sum[n]=synapse的第n行乘neuron的全部啊!

for(int ii=iii;ii<iii+Tii;ii+=Ti)

//上面的for是对Ti进行拆

for(int n=nn;n<nn+Tn;n++)

for(int i=ii;i<ii+Ti;i++)

sum[n]+=synapse[n][i]*neuron[i];

for(int nn=nnn;nn<nnn+Tnn;nn+=Tn)

neuron[n]=sigmoid(sum[n]);

///

} } }

- 在tiled代码中, i i ii ii和 n n nn nn

- 表示NFU有 T i T_i Ti个输入和突触

- 和 T n T_n Tn个输出神经元

- 表示NFU有 T i T_i Ti个输入和突触

- 输入神经元被每个输出神经元需要重用

- 但这个输入向量也太他妈大了

- 塞不到Nbin块里啊

- 所以也要对循环 i i ii ii分块,因子 T i i T_{ii} Tii

上面的代码肯定有问题,正确的如下:

for (int nnn = 0; nnn < Nn; nnn += Tnn) {

for (int nn = nnn; nn < nnn + Tnn; nn += Tn) {

for (int n = nn; n < nn + Tn; n++)

sum[n] = 0;

for (int iii = 0; iii < Ni; iii += Tii) {

for (int ii = iii; ii < iii + Tii; ii += Ti)

for (int n = nn; n < nn + Tn; n++)

for (int i = ii; i < ii + Ti; i++)

sum[n] += synapse[n][i] * neuron[i];

}

for (int n = nn; n < nn + Tn; n++)

printf("s%ds ", sum[n]);

}

}

for (int index = 0; index < Nn; index++)

printf("%d ", sum[index]);

智能推荐

数据库安全:Hadoop 未授权访问-命令执行漏洞._hadoop未授权访问-程序员宅基地

文章浏览阅读2.9k次,点赞3次,收藏4次。Hadoop 未授权访问主要因HadoopYARN资源管理系统配置不当,导致可以未经授权进行访问,从而被攻击者恶意利用。攻击者无需认证即可通过RESTAPI部署任务来执行任意指令,最终完全控制服务器。_hadoop未授权访问

100个替代昂贵商业软件的开源应用_citadel开源中文版本-程序员宅基地

文章浏览阅读4.1k次,点赞3次,收藏18次。100个替代昂贵商业软件的开源应用面对大,中,小企业和家庭用户,立竿见影显著降低成本的开源软件。某些商业软件素以昂贵著称。随着云计算的日益普及,很多常用软件包供应商将一次性收费改为月租模式。虽然月租费貌似便宜,但也经不起长时间的累积。100个替代昂贵商业软件的开源应用尽管有许多好理由,但避免或减少使用费,仍然是许多用户看中开源应用软件的主要因素。基于这一点,我们更新了可替代_citadel开源中文版本

竞选计算机协会网络部部长,2019年计算机协会部长竞选演讲稿-程序员宅基地

文章浏览阅读55次。2019年计算机协会部长竞选演讲稿篇一:计算机协会部长竞选演讲稿尊敬的领导,敬爱的老师,亲爱的同学们:大家晚上好!俗话说:马只有驰骋千里,方知其是否为良驹;人只有通过竞争,才能知其是否为栋梁。我是来自xxx班的伍朝海,今晚,我很荣幸能够站在这里参加这次学生会的竞选,职位是xx系的宣传窗口——新闻网络部的负责人。我知道,今晚竞选的不仅仅是个职位,也是在竞选一个为同学们服务的机会,更是在竞选一个为我们...

ipython和jupyter notebook_第02章 Python语法基础,IPython和Jupyter Notebook-程序员宅基地

文章浏览阅读185次。第2章 Python语法基础,IPython和Jupyter Notebooks当我在2011年和2012年写作本书的第一版时,可用的学习Python数据分析的资源很少。这部分上是一个鸡和蛋的问题:我们现在使用的库,比如pandas、scikit-learn和statsmodels,那时相对来说并不成熟。2017年,数据科学、数据分析和机器学习的资源已经很多,原来通用的科学计算拓展到了计算机科学家..._jupyter notebook if后面有多个条件

雪花算法生成的ID精度丢失问题_雪花算法生成id精度丢失-程序员宅基地

文章浏览阅读494次,点赞2次,收藏2次。雪花算法生成的ID精度丢失问题雪花算法ID精度丢失_雪花算法生成id精度丢失

Sublime Text关闭更新(亲测可用)_sublime关闭更新检测-程序员宅基地

文章浏览阅读2.2k次。sumlime text关闭自动更新_sublime关闭更新检测

随便推点

文本文件数据输入与读取_文本输入读取-程序员宅基地

文章浏览阅读654次。步骤1两个Edittext用来作为输入和获取的媒介<android.support.constraint.ConstraintLayout ="http://schemas.android.com/apk/res/android" xmlns:app="http://schemas.android.com/apk/res-auto" xmlns:to..._文本输入读取

极速进化,光速转录,C++版本人工智能实时语音转文字(字幕/语音识别)Whisper.cpp实践_c++语音识别库-程序员宅基地

文章浏览阅读2.8k次,点赞3次,收藏8次。业界良心OpenAI开源的[Whisper模型](https://v3u.cn/a_id_272)是开源语音转文字领域的执牛耳者,白璧微瑕之处在于无法通过苹果M芯片优化转录效率,Whisper.cpp 则是 Whisper 模型的 C/C++ 移植版本,它具有无依赖项、内存使用量低等特点,重要的是增加了 Core ML 支持,完美适配苹果M系列芯片。 _c++语音识别库

前端(vue)导出word文档(导出图片)_前端批量docx转jpg-程序员宅基地

文章浏览阅读688次,点赞7次,收藏11次。导出word文档方法有很多,但这次要导出图片,所以选用了html-docxhtml-docx是根据html代码进行导出........_前端批量docx转jpg

TaiShan 200服务器安装Ubuntu 18.04_ubuntu登录华为泰山服务器.-程序员宅基地

文章浏览阅读749次。TaiShan 200 服务器 Ubuntu 18.04 安装指南, amr64,aarch64_ubuntu登录华为泰山服务器.

linux openerp,openerp-程序员宅基地

文章浏览阅读101次。实验环境centos7_x64实验软件odoo_8.0.20170101.noarch.rpm软件安装yum install -y /root/odoo_8.0.20170101.noarch.rpmyum install -y yum-utils postgresql postgresql-server postgresql-libspostgresql-setup initdbsu - po..._openerp中文版 for liunx

STM32+AS608指纹模块串口通讯_as068-程序员宅基地

文章浏览阅读2.1w次,点赞53次,收藏263次。STM32+AS08指纹模块串口通讯一. 使用硬件:stm32F103 -mini stm32开发板+AS608指纹模块+usb转串口实物图:硬件接线:注意:usb转串口线是连接串口1即PA9,PA10引脚的,并接上VCC、GND提供电源二. AS068工作流程:As068模块驱动采用的是正点原子公司提供的As068.c及As068.h文件,具体..._as068