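Overview of log management tools

First, here is the list of log aggregation tools from the article "Recommended! A Collection of System Administrator Resources Compiled by Overseas Programmers":

Log management tools: collection, parsing, visualization

- Elasticsearch - A Lucene-based document store, mainly used for indexing, storing, and analyzing logs.

- Fluentd - Log collection and forwarding

- Flume - Distributed log collection and aggregation system

- Graylog2 - Pluggable log and event analysis server with alerting options

- Heka - Stream processing system that can be used for log aggregation

- Kibana - Visualization of logs and timestamped data

- Logstash - Tool for managing events and logs

- Octopussy - Log management solution (visualization / alerting / reporting)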

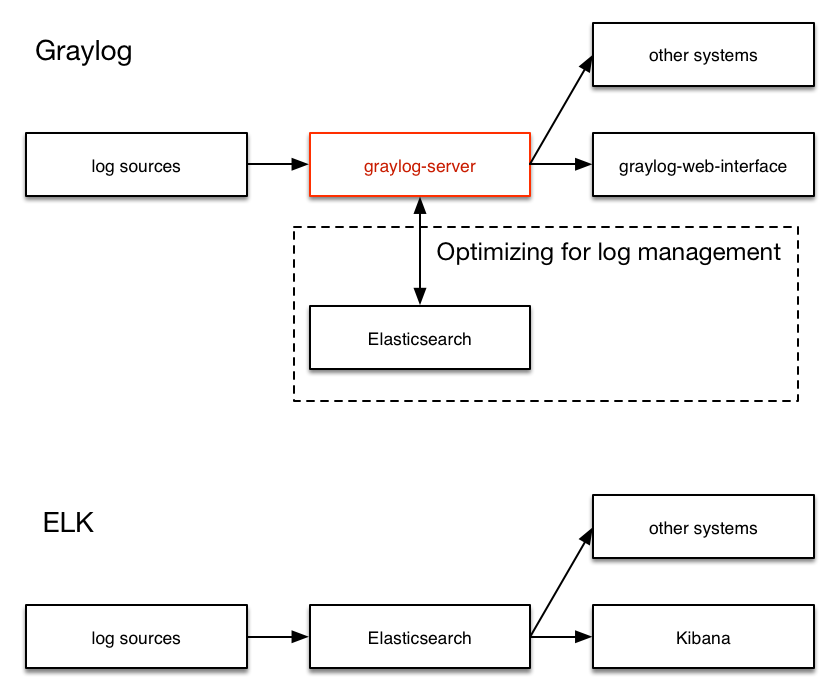

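Graylog compared with the ELK stack

- ELK: Logstash -> Elasticsearch -> Kibana

- Graylog: Graylog Collector -> Graylog Server (wraps Elasticsearch) -> Graylog Web

I previously tried a Fluentd + Elasticsearch + Kibana setup and found several shortcomings:

- It cannot handle multi-line logs, such as the MySQL slow query log or the Java exception stack traces printed by Tomcat/Jetty applications.

- It cannot keep the raw log line. It only stores the parsed fields, so search results come back as a pile of JSON text that is hard to read.

- Log lines that do not match the regular expression are all discarded.

To fix these three shortcomings I went looking for an alternative. The first candidate was the commercial tool Splunk, which bills itself as the Google of logs because of its full-text log search. It solves all three problems and adds attractive features such as highlighting of search terms and color-coding by log level. However, the free edition has a 500 MB limit and the paid edition reportedly costs about 30,000 US dollars, so I had to give it up and keep looking. Eventually I found Graylog. At first glance it looked like nothing more than a collector for syslog and did not appeal to me at all. After digging deeper, though, I found that Graylog is essentially an open-source Splunk. Here is my own summary of what makes Graylog attractive: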

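- It is an all-in-one solution that is easy to install, unlike ELK, where three separate systems have to be integrated.

- It ingests the raw log and lets you add fields afterwards, such as http_status_code, response_time, and so on.

- You can write your own log collection scripts and send the logs to Graylog Server with curl/nc. The wire format is GELF, which accepts custom fields, and both Fluentd and Logstash have plugins that output GELF messages. Writing your own collector gives you a lot of freedom. In practice all you need is inotifywait to watch the log file for modify events, then send the newly appended lines to Graylog Server with curl/netcat.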

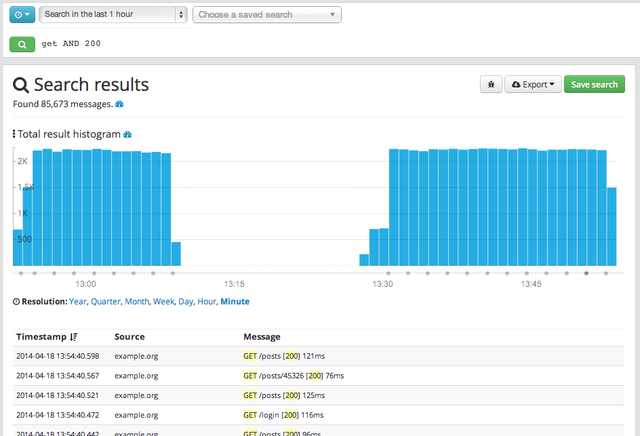

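- Search results are highlighted, just like Google.

- The search syntax is simple, for example:

source:mongo AND response_time_ms:>5000

This avoids typing Elasticsearch's JSON query syntax directly.

- The search criteria can be exported as Elasticsearch query JSON, which makes it easy to write search scripts that call the Elasticsearch REST API directly.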
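To illustrate the last point, here is a minimal Python 3 sketch of the kind of JSON body such a search script might send to Elasticsearch. It wraps the same Lucene-style query string in a `query_string` query. The helper name `build_es_query`, the result size, and the sort on `timestamp` are assumptions for illustration, and the JSON that Graylog itself exports may look different.

```python
import json


def build_es_query(query_string, size=10):
    """Wrap a Lucene-style query string in an Elasticsearch query_string query.

    `size` and the sort order are illustrative defaults, not values taken from Graylog.
    """
    return {
        "query": {"query_string": {"query": query_string}},
        "size": size,
        "sort": [{"timestamp": {"order": "desc"}}],
    }


body = build_es_query("source:mongo AND response_time_ms:>5000")
print(json.dumps(body, indent=2))
```

The printed JSON could then be posted to the `_search` endpoint of the Elasticsearch node with curl or any HTTP client.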

Graylog图解

Graylog开源版官网: https://www.graylog.org/

来几张官网的截图:

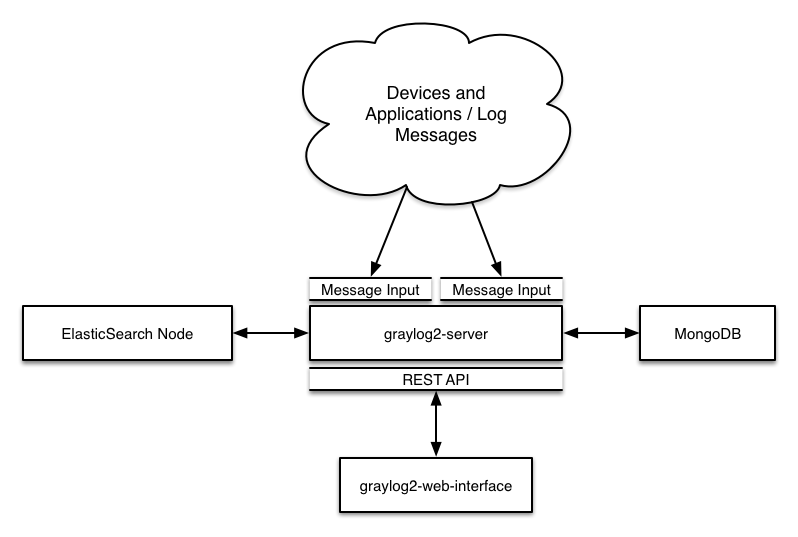

1.架构图

2.屏幕截图

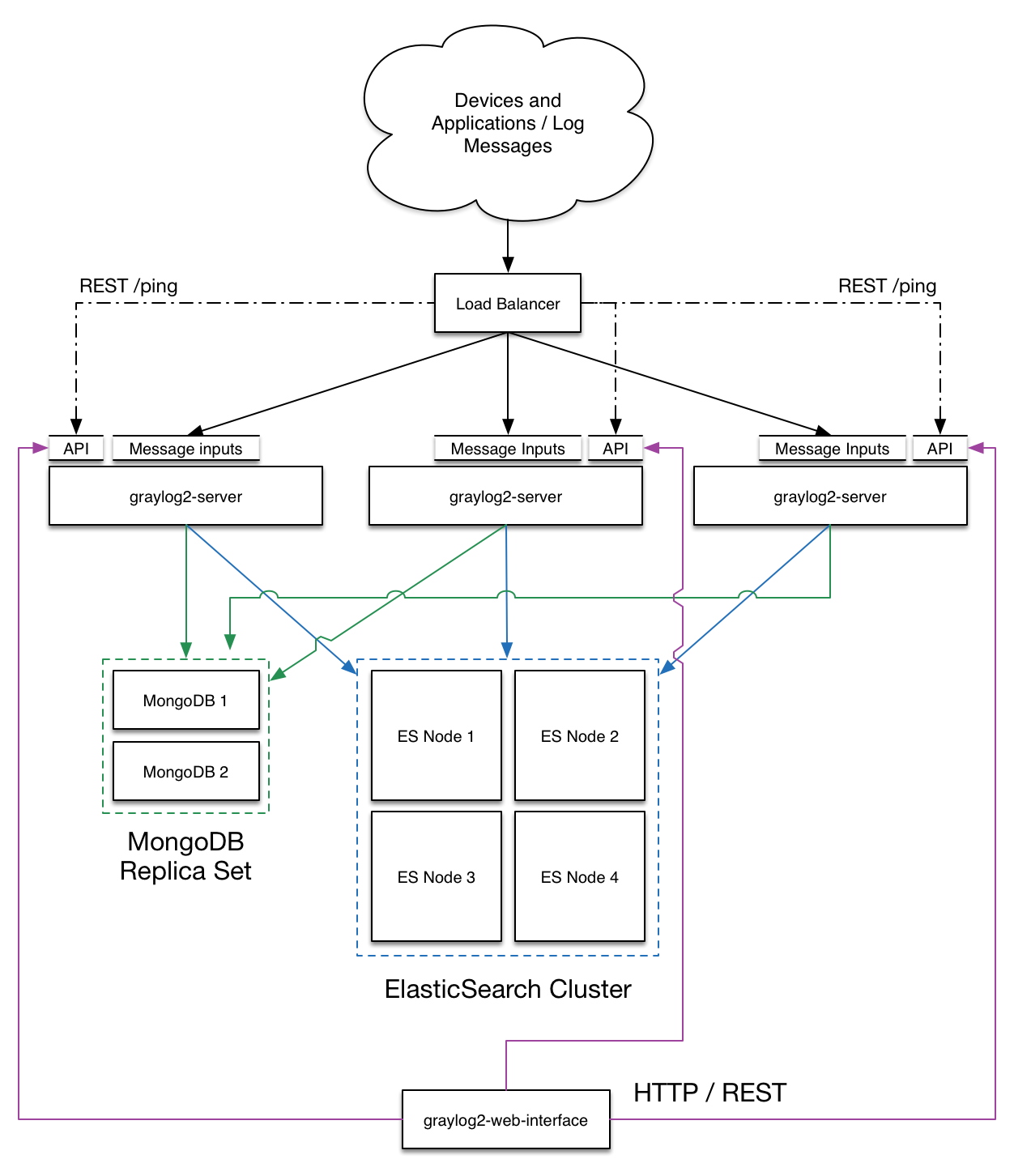

3.部署图

最小安装:

生产环境安装:

Installing the Graylog server

The installation consists of four components:

- mongodb

- elasticsearch

- graylog-server

- graylog-web

The environment below is CentOS 6.6, the server IP is 10.0.0.11, and jre-1.7.0-openjdk is already installed.

- mongodb

http://docs.mongodb.org/manual/tutorial/install-mongodb-on-red-hat

[root@logserver yum.repos.d]# vim /etc/yum.repos.d/mongodb-org-3.0.repo

---

[mongodb-org-3.0]

name=MongoDB Repository

baseurl=http://repo.mongodb.org/yum/redhat/$releasever/mongodb-org/3.0/x86_64/

gpgcheck=0

enabled=1

---

[root@logserver yum.repos.d]# yum install -y mongodb-org

[root@logserver yum.repos.d]# vi /etc/yum.conf

Append as the last line:

---

exclude=mongodb-org,mongodb-org-server,mongodb-org-shell,mongodb-org-mongos,mongodb-org-tools

---

[root@logserver yum.repos.d]# service mongod start

[root@logserver yum.repos.d]# chkconfig mongod on

[root@logserver yum.repos.d]# vi /etc/security/limits.conf

Append at the end of the file:

---

* soft nproc 65536

* hard nproc 65536

mongod soft nproc 65536

* soft nofile 131072

* hard nofile 131072

---

[root@logserver ~]# vi /etc/init.d/mongod

Insert the following before the line "ulimit -f unlimited":

---

if test -f /sys/kernel/mm/transparent_hugepage/enabled; then

echo never > /sys/kernel/mm/transparent_hugepage/enabled

fi

if test -f /sys/kernel/mm/transparent_hugepage/defrag; then

echo never > /sys/kernel/mm/transparent_hugepage/defrag

fi

---

[root@logserver ~]# /etc/init.d/mongod restart

- elasticsearch

The latest Elasticsearch release is 1.6.0; the repository configured below installs the 1.5.x series.

https://www.elastic.co/guide/en/elasticsearch/reference/current/setup-repositories.html

[root@logserver ~]# rpm --import https://packages.elastic.co/GPG-KEY-elasticsearch

[root@logserver ~]# vi /etc/yum.repos.d/elasticsearch.repo

---

[elasticsearch-1.5]

name=Elasticsearch repository for 1.5.x packages

baseurl=http://packages.elastic.co/elasticsearch/1.5/centos

gpgcheck=1

gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch

enabled=1

---

[root@logserver ~]# yum install elasticsearch

[root@logserver ~]# chkconfig --add elasticsearch

[root@logserver ~]# vi /etc/elasticsearch/elasticsearch.yml

32 cluster.name: graylog

[root@logserver ~]# /etc/init.d/elasticsearch start

[root@logserver ~]# curl localhost:9200

- graylog

The latest Graylog release is 1.1.4, available from the links below (the commands that follow download and install 1.0.2):

https://packages.graylog2.org/repo/el/6Server/1.1/x86_64/graylog-server-1.1.4-1.noarch.rpm

https://packages.graylog2.org/repo/el/6Server/1.1/x86_64/graylog-web-1.1.4-1.noarch.rpm

[root@logserver ~]# wget https://packages.graylog2.org/repo/el/6Server/1.0/x86_64/graylog-server-1.0.2-1.noarch.rpm

[root@logserver ~]# wget https://packages.graylog2.org/repo/el/6Server/1.0/x86_64/graylog-web-1.0.2-1.noarch.rpm

[root@logserver ~]# rpm -ivh graylog-server-1.0.2-1.noarch.rpm

[root@logserver ~]# rpm -ivh graylog-web-1.0.2-1.noarch.rpm

[root@logserver ~]# /etc/init.d/graylog-server start

Starting graylog-server: [ OK ]

Startup failed!

[root@logserver ~]# cat /var/log/graylog-server/server.log

2015-05-22T15:53:14.962+08:00 INFO [CmdLineTool] Loaded plugins: []

2015-05-22T15:53:15.032+08:00 ERROR [Server] No password secret set. Please define password_secret in your graylog2.conf.

2015-05-22T15:53:15.033+08:00 ERROR [CmdLineTool] Validating configuration file failed - exiting.

[root@logserver ~]# yum install pwgen

[root@logserver ~]# pwgen -N 1 -s 96

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

[root@logserver ~]# echo -n 123456 | sha256sum

xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx -

[root@logserver ~]# vi /etc/graylog/server/server.conf

11 password_secret = zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

...

22 root_password_sha2 = xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

...

152 elasticsearch_cluster_name = graylog

[root@logserver ~]# /etc/init.d/graylog-server restart

Startup succeeded!
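The two values that server.conf needs can also be produced without pwgen and sha256sum. The following Python 3 sketch generates a 96-character random secret and the SHA-256 hex digest of a password. The function names are made up for this example, and the letters-plus-digits alphabet is an assumption chosen to resemble the pwgen output.

```python
import hashlib
import secrets
import string


def generate_password_secret(length=96):
    """Return a random alphanumeric string, similar in spirit to `pwgen -N 1 -s 96`."""
    alphabet = string.ascii_letters + string.digits
    return "".join(secrets.choice(alphabet) for _ in range(length))


def hash_root_password(password):
    """Return the SHA-256 hex digest, like `echo -n password | sha256sum`."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest()


print("password_secret =", generate_password_secret())
print("root_password_sha2 =", hash_root_password("123456"))
```

A SHA-256 hex digest is always 64 characters long, so a shorter value in root_password_sha2 indicates a copy and paste mistake.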

[root@logserver ~]# /etc/init.d/graylog-web start

Starting graylog-web: [ OK ]

Startup failed!

[root@logserver ~]# cat /var/log/graylog-web/application.log

2015-05-22T15:53:22.960+08:00 - [ERROR] - from lib.Global in main

Please configure application.secret in your conf/graylog-web-interface.conf

2015-05-22T16:25:55.343+08:00 - [ERROR] - from lib.Global in main

Please configure application.secret in your conf/graylog-web-interface.conf

[root@logserver ~]# pwgen -N 1 -s 96

yyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyy

[root@logserver ~]# vi /etc/graylog/web/web.conf

---

2 graylog2-server.uris="http://127.0.0.1:12900/"

12 application.secret="yyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyyy"

---

Note: the graylog2-server.uris value in /etc/graylog/web/web.conf must match rest_listen_uri in /etc/graylog/server/server.conf

---

36 rest_listen_uri = http://127.0.0.1:12900/

---
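A mismatch between these two settings is easy to miss, so a small check can help. The Python 3 sketch below parses "key = value" style text and compares the two values. The helper names `read_setting` and `uris_match` are hypothetical, and the parser is deliberately simple: it assumes one setting per line and a single URI in graylog2-server.uris.

```python
def read_setting(text, key):
    """Return the value of `key` from 'key = value' style config text, or None."""
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        name, value = line.split("=", 1)
        if name.strip() == key:
            return value.strip().strip('"')
    return None


def uris_match(server_conf_text, web_conf_text):
    """True when rest_listen_uri and graylog2-server.uris are set and identical."""
    rest = read_setting(server_conf_text, "rest_listen_uri")
    uris = read_setting(web_conf_text, "graylog2-server.uris")
    return rest is not None and rest == uris


server_conf = "rest_listen_uri = http://127.0.0.1:12900/\n"
web_conf = 'graylog2-server.uris="http://127.0.0.1:12900/"\n'
print(uris_match(server_conf, web_conf))
```

In real use the two strings would be read from /etc/graylog/server/server.conf and /etc/graylog/web/web.conf.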

[root@logserver ~]# /etc/init.d/graylog-web restart

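Enter http://10.0.0.11:9000/ in a browser to reach the Graylog login page. Administrator account/password: admin/123456

- Adding log inputs

Log in to http://10.0.0.11:9000/ as admin

4.1 Go to System > Inputs > Inputs in Cluster > Raw/Plaintext TCP | Launch new input, name it "tcp 5555", and finish creating the input

On any Linux machine with nc installed, run: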

echo `date` | nc 10.0.0.11 5555

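In the browser, on the home page shown after logging in to http://10.0.0.11:9000/, click the green search button on the third row. A new message appears: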

Timestamp Source Message

2015-05-22 08:49:15.280 10.0.0.157 2015年 05月 22日 星期五 16:48:28 CST

This shows that the installation succeeded!
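The nc test above can also be done from a script. This Python 3 sketch sends one newline-terminated line to a Raw/Plaintext TCP input. The function names are invented for this example, and the one-message-per-line framing is an assumption that mirrors what `echo ... | nc` sends.

```python
import socket


def encode_line(message):
    """Return the message as UTF-8 bytes ending in exactly one newline."""
    return (message.rstrip("\n") + "\n").encode("utf-8")


def send_raw_line(host, port, message, timeout=3.0):
    """Open a TCP connection, send one line, and close the connection."""
    data = encode_line(message)
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(data)
    return len(data)
```

With the input created above, calling `send_raw_line("10.0.0.11", 5555, "hello from python")` should produce a new message in the search view, just like the nc command.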

4.2 Go to System > Inputs > Inputs in Cluster > GELF HTTP | Launch new input, name it "http 12201", and finish creating the input

On any Linux machine with curl installed, run:

curl -XPOST http://10.0.0.11:12201/gelf -p0 -d '{"short_message":"Hello there", "host":"example.org", "facility":"test", "_foo":"bar"}'

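In the browser, on the home page shown after logging in to http://10.0.0.11:9000/, click the green search button on the third row. A new message appears: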

Timestamp Source Message

2015-05-22 08:49:15.280 10.0.0.157 Hello there

This shows that the GELF HTTP input is set up correctly!
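The curl test above hand-writes the JSON, which becomes fragile once messages contain quotes. As a sketch of an alternative, the Python 3 code below builds a GELF message as a dictionary and lets `json.dumps` handle the escaping. The names `build_gelf` and `post_gelf` are hypothetical, and the code assumes that custom fields only need a leading underscore, as in the `_foo` field of the curl example.

```python
import json
import time
import urllib.request


def build_gelf(short_message, host, level=6, **extra):
    """Build a GELF message dict; extra keyword arguments become _custom fields."""
    message = {
        "version": "1.1",
        "host": host,
        "short_message": short_message,
        "timestamp": time.time(),
        "level": level,
    }
    for key, value in extra.items():
        message["_" + key.lstrip("_")] = value
    return message


def post_gelf(url, message, timeout=3.0):
    """POST the message as JSON to a GELF HTTP input and return the HTTP status."""
    request = urllib.request.Request(
        url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

For the input created above, `post_gelf("http://10.0.0.11:12201/gelf", build_gelf("Hello there", "example.org", foo="bar"))` would send the same message as the curl command.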

- Time zone and highlighting settings

Time zone of the admin account:

[root@logserver ~]# vi /etc/graylog/server/server.conf

---

30 root_timezone = Asia/Shanghai

---

[root@logserver ~]# /etc/init.d/graylog-server restart

Default time zone for other accounts:

[root@logserver ~]# vi /etc/graylog/web/web.conf

---

18 timezone="Asia/Shanghai"

---

[root@logserver ~]# /etc/init.d/graylog-web restart

Enable highlighting of search results:

[root@logserver ~]# vi /etc/graylog/server/server.conf

---

147 allow_highlighting = true

---

[root@logserver ~]# /etc/init.d/graylog-server restart

- Addendum: changing the CSS colors

[root@logserver ~]# cp /usr/share/graylog-web/lib/graylog-web-interface.graylog-web-interface-1.1.4-assets.jar .

[root@logserver ~]# mkdir jar_tmp

[root@logserver ~]# cd jar_tmp

[root@logserver jar_tmp]# jar xvf ../graylog-web-interface.graylog-web-interface-1.1.4-assets.jar

[root@logserver jar_tmp]# vi public/stylesheets/graylog2.less

---

2347 font-family: monospace;

2348 color: #16ace3;

->

2347 /*font-family: monospace;*/

2348 /*color: #16ace3;*/

---

[root@logserver jar_tmp]# jar cvfm graylog-web-interface.graylog-web-interface-1.1.4-assets.jar META-INF/MANIFEST.MF .

[root@logserver jar_tmp]# sudo /etc/init.d/graylog-web stop

[root@logserver jar_tmp]# cd /usr/share/graylog-web/lib/

[root@logserver lib]# sudo mv graylog-web-interface.graylog-web-interface-1.1.4-assets.jar graylog-web-interface.graylog-web-interface-1.1.4-assets.jar.origin

[root@logserver lib]# sudo cp ~/jar_tmp/graylog-web-interface.graylog-web-interface-1.1.4-assets.jar .

[root@logserver lib]# sudo /etc/init.d/graylog-web start

- Moving the data directories

Move the Elasticsearch data directory

[root@logserver ~]# sudo /etc/init.d/elasticsearch stop

[root@logserver ~]# sudo cp -rp /var/lib/elasticsearch/ /data/

[root@logserver ~]# sudo vi /etc/sysconfig/elasticsearch

+16 DATA_DIR=/data/elasticsearch

[root@logserver ~]# sudo /etc/init.d/elasticsearch start

Move the MongoDB data directory

[root@logserver ~]# sudo /etc/init.d/mongod stop

[root@logserver ~]# sudo cp -rp /var/lib/mongo /data/

[root@logserver ~]# sudo vi /etc/mongod.conf

---

13 dbpath=/var/lib/mongo

->

13 dbpath=/data/mongo

---

[root@logserver ~]# sudo /etc/init.d/mongod start

Sending logs to the Graylog server

Sending over HTTP:

http://docs.graylog.org/en/1.1/pages/sending_data.html#gelf-via-http

curl -XPOST http://graylog.example.org:12202/gelf -p0 -d '{"short_message":"Hello there", "host":"example.org", "facility":"test", "_foo":"bar"}'

Sending over TCP:

http://docs.graylog.org/en/1.1/pages/sending_data.html#raw-plaintext-inputs

echo "hello, graylog" | nc graylog.example.org 5555

Collecting nginx logs with inotifywait

gather-nginx-log.sh

#!/bin/bash

app=nginx

node=$HOSTNAME

log_file=/var/log/nginx/nginx.log

graylog_server_ip=10.0.0.11

graylog_server_port=12201

while inotifywait -e modify $log_file; do

last_size=`cat ${app}.size`

curr_size=`stat -c%s $log_file`

echo $curr_size > ${app}.size

count=`echo "$curr_size-$last_size" | bc`

python read_log.py $log_file ${last_size} $count | sed 's/"/\\\\\"/g' > ${app}.new_lines

while read line

do

if echo "$line" | grep "^20[0-9][0-9]-[0-1][0-9]-[0-3][0-9]" > /dev/null; then

seconds=`echo "$line" | cut -d ' ' -f 6`

spend_ms=`echo "${seconds}*1000/1" | bc`

http_status=`echo "$line" | cut -d ' ' -f 2`

echo "http_status -- $http_status"

prefix_number=${http_status:0:1}

if [ "$prefix_number" == "5" ]; then

level=3 #ERROR

elif [ "$prefix_number" == "4" ]; then

level=4 #WARNING

elif [ "$prefix_number" == "3" ]; then

level=5 #NOTICE

elif [ "$prefix_number" == "2" ]; then

level=6 #INFO

elif [ "$prefix_number" == "1" ]; then

level=7 #DEBUG

fi

echo "level -- $level"

curl -XPOST http://${graylog_server_ip}:${graylog_server_port}/gelf -p0 -d "{\"short_message\":\"$line\", \"host\":\"${app}\", \"level\":${level}, \"_node\":\"${node}\", \"_spend_msecs\":${spend_ms}, \"_http_status\":${http_status}}"

echo "gathered -- $line"

fi

done < ${app}.new_lines

done
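The core of the script above is the mapping from the first digit of the HTTP status to a syslog level, plus the conversion of the request time from seconds to milliseconds. The Python 3 sketch below restates just that logic. The function names are invented here, and the default level for an unrecognized status is an assumption, since the shell script leaves `level` unchanged in that case.

```python
from decimal import Decimal

# First digit of the HTTP status -> syslog level, as in the shell script.
STATUS_TO_LEVEL = {"5": 3, "4": 4, "3": 5, "2": 6, "1": 7}


def level_for_status(http_status, default=6):
    """Return the syslog level for a status code; `default` is an assumption."""
    return STATUS_TO_LEVEL.get(str(http_status)[:1], default)


def seconds_to_ms(seconds):
    """Convert a seconds string such as '0.123' to whole milliseconds, truncating like bc."""
    return int(Decimal(str(seconds)) * 1000)


print(level_for_status(502), seconds_to_ms("0.123"))
```

Decimal is used instead of float so that values such as 0.29 are not affected by binary rounding before truncation.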

read_log.py

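#!/usr/bin/python

#coding=utf-8

# Print `count` bytes of a log file, starting at byte offset `print_from`.

import sys

import os

if len(sys.argv) < 4:

    print("Usage: %s /path/of/log/file print_from count" % (sys.argv[0]))

    print("Example: %s /var/log/syslog 90000 100" % (sys.argv[0]))

    sys.exit(1)

filename = sys.argv[1]

if not os.path.isfile(filename):

    print("%s not existing!!!" % (filename))

    sys.exit(1)

filesize = os.path.getsize(filename)

position = int(sys.argv[2])

if filesize < position:

    # The file is smaller than the saved offset, so it was probably rotated.

    # Report on stderr so the notice is not forwarded as a log line, then start from the beginning.

    sys.stderr.write("log file may have been cut by logrotate (offset %d > size %d), printing from the beginning\n" % (position, filesize))

    position = 0

count = int(sys.argv[3])

fo = open(filename, "r")

fo.seek(position, 0)

# A negative count (which happens after rotation) makes read() return everything up to EOF.

content = fo.read(count)

print(content.strip())

# Close opened file

fo.close()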
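The shell scripts keep the last seen file size in a `.size` file and pass the offset and byte count to read_log.py. As a sketch of the same idea in one place, this Python 3 function returns the bytes appended since the last offset and the new offset, restarting from zero when the file has shrunk. The name `read_new_bytes` is made up for this example, and treating any shrink as a rotation is an assumption.

```python
import os


def read_new_bytes(path, last_offset):
    """Return (new_data, new_offset) for bytes appended to `path` since `last_offset`."""
    size = os.path.getsize(path)
    if size < last_offset:
        # File is smaller than before: assume it was rotated and start over.
        last_offset = 0
    with open(path, "rb") as handle:
        handle.seek(last_offset)
        data = handle.read(size - last_offset)
    return data, last_offset + len(data)
```

The caller stores the returned offset and passes it back in on the next modify event, which replaces the separate size file and the bc subtraction.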

Collecting iotop output every 5 seconds to find processes doing heavy disk reads and writes

#!/bin/bash

app=iotop

node=$HOSTNAME

graylog_server_ip=10.0.0.11

graylog_server_port=12201

while true; do

sudo /usr/sbin/iotop -b -o -t -k -q -n2 | sed 's/"/\\\\\"/g' > /dev/shm/graylog_client.${app}.new_lines

while read line; do

if echo "$line" | grep "^[0-2][0-9]:[0-5][0-9]:[0-5][0-9]" > /dev/null; then

read -a WORDS <<< $line

epoch_seconds=`date --date="${WORDS[0]}" +%s.%N`

pid=${WORDS[1]}

read_float_kps=${WORDS[4]}

read_int_kps=${read_float_kps%.*}

write_float_kps=${WORDS[6]}

write_int_kps=${write_float_kps%.*}

command=${WORDS[12]}

if [ "$command" == "bash" ] && (( ${#WORDS[*]} > 13 )); then

pname=${WORDS[13]}

elif [ "$command" == "java" ] && (( ${#WORDS[*]} > 13 )); then

arg0=${WORDS[13]}

pname=${arg0#*=}

else

pname=$command

fi

curl --connect-timeout 1 -s -XPOST http://${graylog_server_ip}:${graylog_server_port}/gelf -p0 -d "{\"timestamp\":$epoch_seconds, \"short_message\":\"${line::200}\", \"full_message\":\"$line\", \"host\":\"${app}\", \"_node\":\"${node}\", \"_pid\":${pid}, \"_read_kps\":${read_int_kps}, \"_write_kps\":${write_int_kps}, \"_pname\":\"${pname}\"}"

fi

done < /dev/shm/graylog_client.${app}.new_lines

sleep 4

done
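The field positions used in the script above are easier to follow on a concrete line. The Python 3 sketch below applies the same indices to one line of batch output. The sample line is invented for illustration and assumes the column layout that the script's indices imply (time, PID, priority, user, read rate, write rate, swap-in, I/O, command). The function name is hypothetical.

```python
def parse_iotop_line(line):
    """Extract the fields the shell script sends from one iotop batch line."""
    words = line.split()
    if len(words) < 13:
        return None  # header or incomplete line
    command = words[12]
    if command == "bash" and len(words) > 13:
        pname = words[13]  # script name run by bash
    elif command == "java" and len(words) > 13:
        pname = words[13].split("=", 1)[-1]  # text after the first '=' of the first argument
    else:
        pname = command
    return {
        "time": words[0],
        "pid": int(words[1]),
        "read_kps": int(float(words[4])),
        "write_kps": int(float(words[6])),
        "pname": pname,
    }


sample = "16:48:28 1234 be/4 root 0.00 K/s 512.25 K/s 0.00 % 1.50 % java -Dname=myapp -Xmx1g"
print(parse_iotop_line(sample))
```

Once the rates are numeric fields in Graylog, a search such as `_write_kps:>1000` can pick out the heavy writers.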

Collecting Android app logs

device.env

export device=4b13c85c

export app=com.tencent.mm

export filter="\( I/ServerAsyncTask2(\| W/\| E/\)"

export graylog_server_ip=10.0.0.11

export graylog_server_port=12201

adblog.sh

#!/bin/bash

. ./device.env

adb -s $device logcat -v time *:I | tee -a adb.log

ga-androidapp-log.sh

#!/bin/bash

. ./device.env

log_file=./adb.log

node=$device

if [ ! -f $log_file ]; then

echo $log_file not exist!!

echo 0 > ${app}.size

exit 1

fi

if [ ! -f ${app}.size ]; then

curr_size=`stat -c%s $log_file`

echo $curr_size > ${app}.size

fi

while inotifywait -qe modify $log_file > /dev/null; do

last_size=`cat ${app}.size`

curr_size=`stat -c%s $log_file`

echo $curr_size > ${app}.size

pids=`./get_pids.py $app $device`

if [ "$pids" == "" ]; then

continue

fi

count=`echo "$curr_size-$last_size" | bc`

python read_log.py $log_file ${last_size} $count | grep "$pids" | sed 's/"/\\\\\"/g' | sed 's/\t/ /g' > ${app}.new_lines

#echo "${app}.new_lines lines: `wc -l ${app}.new_lines`"

while read line

do

if echo "$line" | grep "$filter" > /dev/null; then

priority=${line:19:1}

if [ "$priority" == "F" ]; then

level=1 #ALERT

elif [ "$priority" == "E" ]; then

level=3 #ERROR

elif [ "$priority" == "W" ]; then

level=4 #WARNING

elif [ "$priority" == "I" ]; then

level=6 #INFO

fi

#echo "level -- $level"

curl -XPOST http://${graylog_server_ip}:${graylog_server_port}/gelf -p0 -d "{\"short_message\":\"$line\", \"host\":\"${app}\", \"level\":${level}, \"_node\":\"${node}\"}"

echo "GATHERED -- $line"

#else

#echo "ignored -- $line"

fi

done < ${app}.new_lines

done
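The script above reads the logcat priority letter from a fixed column (`${line:19:1}`) and maps it to a syslog level. The Python 3 sketch below shows that step on a sample line in `logcat -v time` format. The sample line and the function name are made up for illustration, and returning None for other priorities is an assumption, since the shell script does not set a level for them.

```python
# logcat priority letter -> syslog level, as in the shell script.
LOGCAT_LEVELS = {"F": 1, "E": 3, "W": 4, "I": 6}


def level_for_logcat_line(line):
    """Return the syslog level for a `logcat -v time` line, or None if unmapped."""
    if len(line) < 20:
        return None
    # Characters 0-17 hold "MM-DD HH:MM:SS.mmm", 18 is a space, 19 is the priority.
    return LOGCAT_LEVELS.get(line[19])


sample = "05-22 16:48:28.123 I/ServerAsyncTask2( 1234): request finished"
print(level_for_logcat_line(sample))
```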

get_pids.py

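#!/usr/bin/python

# Python 2 script (it relies on the `commands` module).

# Prints a grep pattern that matches the "(pid)" part of logcat lines for the given package.

import sys

import os

import commands

if __name__ == "__main__":

    if len(sys.argv) != 3:

        print(sys.argv[0] + " packageName device")

        sys.exit()

    device = sys.argv[2]

    # Columns 11-15 of `adb shell ps` output hold the PID

    cmd = "adb -s " + device + " shell ps | grep " + sys.argv[1] + " | cut -c11-15"

    output = commands.getoutput(cmd)

    if output == "":

        sys.exit()

    originpids = output.split("\n")

    strippids = map((lambda pid: int(pid, 10)), originpids)

    # Right-align each PID to 5 characters, the same way logcat prints it

    pids = map((lambda pid: "%5d" % pid), strippids)

    pattern = "\((" + ")\|(".join(pids) + ")\)"

    print(pattern)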
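The pattern that this script prints is the least obvious part, so here is the pattern-building step on its own as a Python 3 sketch. It takes a list of PIDs instead of calling adb, and the function name `build_pid_pattern` is invented for this example. In grep's basic regular expression syntax, `\(`, `\|` and `\)` are grouping and alternation, while the plain parentheses match the literal "(" and ")" that logcat prints around the PID.

```python
def build_pid_pattern(pids):
    """Build a grep basic-regex alternation matching '( 1234)'-style PID fields."""
    padded = ["%5d" % int(pid) for pid in pids]
    return r"\((" + r")\|(".join(padded) + r")\)"


print(build_pid_pattern([1234, 56]))
```

Passing this string to `grep` keeps only the log lines written by the app's own processes.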

Graylog init scripts

[root@logserver init.d]$ cat /etc/init.d/graylog-server

#! /bin/sh

#

# graylog-server Starts/stop the "graylog-server" daemon

#

# chkconfig: - 95 5

# description: Runs the graylog-server daemon

### BEGIN INIT INFO

# Provides: graylog-server

# Required-Start: $network $named $remote_fs $syslog

# Required-Stop: $network $named $remote_fs $syslog

# Default-Start: 2 3 4 5

# Default-Stop: 0 1 6

# Short-Description: Graylog Server

# Description: Graylog Server - Search your logs, create charts, send reports and be alerted when something happens.

### END INIT INFO

# Author: Lee Briggs <[email protected]>

# Contributor: Sandro Roth <[email protected]>

# Contributor: Bernd Ahlers <[email protected]>

# Source function library.

. /etc/rc.d/init.d/functions

RETVAL=0

PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin

DESC="Graylog Server"

NAME=graylog-server

JAR_FILE=/usr/share/graylog-server/graylog.jar

JAVA=/usr/bin/java

PID_DIR=/var/run/graylog-server

PID_FILE=$PID_DIR/$NAME.pid

JAVA_ARGS="-jar -Djava.library.path=/usr/share/graylog-server/lib/sigar -Dlog4j.configuration=file:///etc/graylog/server/log4j.xml $JAR_FILE server -p $PID_FILE -f /etc/graylog/server/server.conf"

SCRIPTNAME=/etc/init.d/$NAME

LOCKFILE=/var/lock/subsys/$NAME

GRAYLOG_SERVER_USER=graylog

GRAYLOG_SERVER_JAVA_OPTS=""

# Pull in sysconfig settings

[ -f /etc/sysconfig/${NAME} ] && . /etc/sysconfig/${NAME}

# Exit if the package is not installed

[ -e "$JAR_FILE" ] || exit 0

[ -x "$JAVA" ] || exit 0

start() {

echo -n $"Starting ${NAME}: "

install -d -m 755 -o $GRAYLOG_SERVER_USER -g $GRAYLOG_SERVER_USER -d $PID_DIR

daemon --check $JAVA --pidfile=${PID_FILE} --user=${GRAYLOG_SERVER_USER} \

"$GRAYLOG_COMMAND_WRAPPER $JAVA $GRAYLOG_SERVER_JAVA_OPTS $JAVA_ARGS $GRAYLOG_SERVER_ARGS &"

RETVAL=$?

sleep 2

[ $RETVAL = 0 ] && touch ${LOCKFILE}

echo

return $RETVAL

}

stop() {

echo -n $"Stopping ${NAME}: "

killproc -p ${PID_FILE} -d 10 $JAVA

RETVAL=$?

[ $RETVAL = 0 ] && rm -f ${PID_FILE} && rm -f ${LOCKFILE}

echo

return $RETVAL

}

case "$1" in

start)

start

;;

stop)

stop

;;

status)

status -p ${PID_FILE} $NAME

RETVAL=$?

;;

restart|force-reload)

stop

start

;;

*)

N=/etc/init.d/${NAME}

echo "Usage: $N {start|stop|status|restart|force-reload}" >&2

RETVAL=2

;;

esac

exit $RETVAL

[root@logserver init.d]$ cat /etc/init.d/graylog-web

#! /bin/sh

#

# graylog-web Starts/stop the "graylog-web" application

#

# chkconfig: - 99 1

# description: Runs the graylog-web application

### BEGIN INIT INFO

# Provides: graylog-web

# Required-Start: $network $named $remote_fs $syslog

# Required-Stop: $network $named $remote_fs $syslog

# Default-Start: 2 3 4 5

# Default-Stop: 0 1 6

# Short-Description: Graylog Web

# Description: Graylog Web - Search your logs, create charts, send reports and be alerted when something happens.

### END INIT INFO

# Author: Lee Briggs <[email protected]>

# Contributor: Bernd Ahlers <[email protected]>

# Some default settings.

GRAYLOG_WEB_HTTP_ADDRESS="0.0.0.0"

GRAYLOG_WEB_HTTP_PORT="9000"

GRAYLOG_WEB_USER="graylog-web"

# Source function library.

. /etc/rc.d/init.d/functions

RETVAL=0

PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin

DESC="Graylog Web"

NAME=graylog-web

CMD=/usr/share/graylog-web/bin/graylog-web-interface

PID_FILE=/var/lib/graylog-web/application.pid

CONF_FILE=/etc/graylog/web/web.conf

SCRIPTNAME=/etc/init.d/$NAME

LOCKFILE=/var/lock/subsys/$NAME

RUN=yes

# Pull in sysconfig settings

[ -f /etc/sysconfig/graylog-web ] && . /etc/sysconfig/graylog-web

# Exit if the package is not installed

[ -e "$CMD" ] || exit 0

start() {

echo -n $"Starting ${NAME}: "

daemon --user=$GRAYLOG_WEB_USER --pidfile=${PID_FILE} \

"nohup $GRAYLOG_COMMAND_WRAPPER $CMD -Dconfig.file=${CONF_FILE} \

-Dlogger.file=/etc/graylog/web/logback.xml \

-Dpidfile.path=$PID_FILE \

-Dhttp.address=$GRAYLOG_WEB_HTTP_ADDRESS \

-Dhttp.port=$GRAYLOG_WEB_HTTP_PORT \

$GRAYLOG_WEB_JAVA_OPTS $GRAYLOG_WEB_ARGS > /var/log/graylog-web/console.log 2>&1 &"

RETVAL=$?

sleep 2

[ $RETVAL = 0 ] && touch ${LOCKFILE}

echo

return $RETVAL

}

stop() {

echo -n $"Stopping ${NAME}: "

killproc -p ${PID_FILE} -d 10 $CMD

RETVAL=$?

[ $RETVAL = 0 ] && rm -f ${PID_FILE} && rm -f ${LOCKFILE}

echo

return $RETVAL

}

case "$1" in

start)

start

;;

stop)

stop

;;

status)

status -p ${PID_FILE} $NAME

RETVAL=$?

;;

restart|force-reload)

stop

start

;;

*)

N=/etc/init.d/${NAME}

echo "Usage: $N {start|stop|status|restart|force-reload}" >&2

RETVAL=2

;;

esac

exit $RETVAL

[root@logserver init.d]$ ls /etc/graylog/server/

log4j.xml node-id server.conf

[root@logserver init.d]$ cat /etc/graylog/server/server.conf

# If you are running more than one instances of graylog2-server you have to select one of these

# instances as master. The master will perform some periodical tasks that non-masters won't perform.

is_master = true

# The auto-generated node ID will be stored in this file and read after restarts. It is a good idea

# to use an absolute file path here if you are starting graylog2-server from init scripts or similar.

node_id_file = /etc/graylog/server/node-id

# You MUST set a secret to secure/pepper the stored user passwords here. Use at least 64 characters.

# Generate one by using for example: pwgen -N 1 -s 96

password_secret = Us5hAey50eHzfJSqrnhUnLv8k8I2QV1JbPcNLVRtZ2lZdLF9b5G2jSYflZMc41IaoD4BEH59Zi9Gkplq0nhWvtxUrLFjsyqe

# The default root user is named 'admin'

#root_username = admin

# You MUST specify a hash password for the root user (which you only need to initially set up the

# system and in case you lose connectivity to your authentication backend)

# This password cannot be changed using the API or via the web interface. If you need to change it,

# modify it in this file.

# Create one by using for example: echo -n yourpassword | shasum -a 256

# and put the resulting hash value into the following line

root_password_sha2 = 554752816c3d52806a0cf8b81c8c32533bd4648eda8401dc90369225f1938b6c

# The email address of the root user.

# Default is empty

#root_email = ""

# The time zone setting of the root user.

# Default is UTC

root_timezone = Asia/Shanghai

# Set plugin directory here (relative or absolute)

plugin_dir = /usr/share/graylog-server/plugin

# REST API listen URI. Must be reachable by other graylog2-server nodes if you run a cluster.

rest_listen_uri = http://127.0.0.1:12900/

# REST API transport address. Defaults to the value of rest_listen_uri. Exception: If rest_listen_uri

# is set to a wildcard IP address (0.0.0.0) the first non-loopback IPv4 system address is used.

# If set, his will be promoted in the cluster discovery APIs, so other nodes may try to connect on

# this address and it is used to generate URLs addressing entities in the REST API. (see rest_listen_uri)

# You will need to define this, if your Graylog server is running behind a HTTP proxy that is rewriting

# the scheme, host name or URI.

#rest_transport_uri = http://192.168.1.1:12900/

# Enable CORS headers for REST API. This is necessary for JS-clients accessing the server directly.

# If these are disabled, modern browsers will not be able to retrieve resources from the server.

# This is disabled by default. Uncomment the next line to enable it.

#rest_enable_cors = true

# Enable GZIP support for REST API. This compresses API responses and therefore helps to reduce

# overall round trip times. This is disabled by default. Uncomment the next line to enable it.

#rest_enable_gzip = true

# Enable HTTPS support for the REST API. This secures the communication with the REST API with

# TLS to prevent request forgery and eavesdropping. This is disabled by default. Uncomment the

# next line to enable it.

#rest_enable_tls = true

# The X.509 certificate file to use for securing the REST API.

#rest_tls_cert_file = /path/to/graylog2.crt

# The private key to use for securing the REST API.

#rest_tls_key_file = /path/to/graylog2.key

# The password to unlock the private key used for securing the REST API.

#rest_tls_key_password = secret

# The maximum size of a single HTTP chunk in bytes.

#rest_max_chunk_size = 8192

# The maximum size of the HTTP request headers in bytes.

#rest_max_header_size = 8192

# The maximal length of the initial HTTP/1.1 line in bytes.

#rest_max_initial_line_length = 4096

# The size of the execution handler thread pool used exclusively for serving the REST API.

#rest_thread_pool_size = 16

# The size of the worker thread pool used exclusively for serving the REST API.

#rest_worker_threads_max_pool_size = 16

# Embedded Elasticsearch configuration file

# pay attention to the working directory of the server, maybe use an absolute path here

#elasticsearch_config_file = /etc/graylog/server/elasticsearch.yml

# Graylog will use multiple indices to store documents in. You can configured the strategy it uses to determine

# when to rotate the currently active write index.

# It supports multiple rotation strategies:

# - "count" of messages per index, use elasticsearch_max_docs_per_index below to configure

# - "size" per index, use elasticsearch_max_size_per_index below to configure

# valid values are "count", "size" and "time", default is "count"

rotation_strategy = count

# (Approximate) maximum number of documents in an Elasticsearch index before a new index

# is being created, also see no_retention and elasticsearch_max_number_of_indices.

# Configure this if you used 'rotation_strategy = count' above.

elasticsearch_max_docs_per_index = 20000000

# (Approximate) maximum size in bytes per Elasticsearch index on disk before a new index is being created, also see

# no_retention and elasticsearch_max_number_of_indices. Default is 1GB.

# Configure this if you used 'rotation_strategy = size' above.

#elasticsearch_max_size_per_index = 1073741824

# (Approximate) maximum time before a new Elasticsearch index is being created, also see

# no_retention and elasticsearch_max_number_of_indices. Default is 1 day.

# Configure this if you used 'rotation_strategy = time' above.

# Please note that this rotation period does not look at the time specified in the received messages, but is

# using the real clock value to decide when to rotate the index!

# Specify the time using a duration and a suffix indicating which unit you want:

# 1w = 1 week

# 1d = 1 day

# 12h = 12 hours

# Permitted suffixes are: d for day, h for hour, m for minute, s for second.

#elasticsearch_max_time_per_index = 1d

# Disable checking the version of Elasticsearch for being compatible with this Graylog release.

# WARNING: Using Graylog with unsupported and untested versions of Elasticsearch may lead to data loss!

#elasticsearch_disable_version_check = true

# Disable message retention on this node, i. e. disable Elasticsearch index rotation.

#no_retention = false

# How many indices do you want to keep?

elasticsearch_max_number_of_indices = 20

# Decide what happens with the oldest indices when the maximum number of indices is reached.

# The following strategies are availble:

# - delete # Deletes the index completely (Default)

# - close # Closes the index and hides it from the system. Can be re-opened later.

retention_strategy = delete

# How many Elasticsearch shards and replicas should be used per index? Note that this only applies to newly created indices.

elasticsearch_shards = 4

elasticsearch_replicas = 0

# Prefix for all Elasticsearch indices and index aliases managed by Graylog.

elasticsearch_index_prefix = graylog2

# Do you want to allow searches with leading wildcards? This can be extremely resource hungry and should only

# be enabled with care. See also: https://www.graylog.org/documentation/general/queries/

allow_leading_wildcard_searches = false

# Do you want to allow searches to be highlighted? Depending on the size of your messages this can be memory hungry and

# should only be enabled after making sure your Elasticsearch cluster has enough memory.

allow_highlighting = true

# settings to be passed to elasticsearch's client (overriding those in the provided elasticsearch_config_file)

# all these

# this must be the same as for your Elasticsearch cluster

elasticsearch_cluster_name = graylog

# you could also leave this out, but makes it easier to identify the graylog2 client instance

#elasticsearch_node_name = graylog2-server

# we don't want the graylog2 server to store any data, or be master node

#elasticsearch_node_master = false

#elasticsearch_node_data = false

# use a different port if you run multiple Elasticsearch nodes on one machine

#elasticsearch_transport_tcp_port = 9350

# we don't need to run the embedded HTTP server here

#elasticsearch_http_enabled = false

#elasticsearch_discovery_zen_ping_multicast_enabled = false

#elasticsearch_discovery_zen_ping_unicast_hosts = 192.168.1.203:9300

# Change the following setting if you are running into problems with timeouts during Elasticsearch cluster discovery.

# The setting is specified in milliseconds, the default is 5000ms (5 seconds).

#elasticsearch_cluster_discovery_timeout = 5000

# the following settings allow to change the bind addresses for the Elasticsearch client in graylog2

# these settings are empty by default, letting Elasticsearch choose automatically,

# override them here or in the 'elasticsearch_config_file' if you need to bind to a special address

# refer to http://www.elasticsearch.org/guide/en/elasticsearch/reference/0.90/modules-network.html

# for special values here

#elasticsearch_network_host =

#elasticsearch_network_bind_host =

#elasticsearch_network_publish_host =

# The total amount of time discovery will look for other Elasticsearch nodes in the cluster

# before giving up and declaring the current node master.

#elasticsearch_discovery_initial_state_timeout = 3s

# Analyzer (tokenizer) to use for message and full_message field. The "standard" filter usually is a good idea.

# All supported analyzers are: standard, simple, whitespace, stop, keyword, pattern, language, snowball, custom

# Elasticsearch documentation: http://www.elasticsearch.org/guide/reference/index-modules/analysis/

# Note that this setting only takes effect on newly created indices.

elasticsearch_analyzer = standard

# Batch size for the Elasticsearch output. This is the maximum (!) number of messages the Elasticsearch output

# module will get at once and write to Elasticsearch in a batch call. If the configured batch size has not been

# reached within output_flush_interval seconds, everything that is available will be flushed at once. Remember

# that every outputbuffer processor manages its own batch and performs its own batch write calls.

# ("outputbuffer_processors" variable)

output_batch_size = 500

# Flush interval (in seconds) for the Elasticsearch output. This is the maximum amount of time between two

# batches of messages written to Elasticsearch. It is only effective at all if your minimum number of messages

# for this time period is less than output_batch_size * outputbuffer_processors.

output_flush_interval = 1

# As stream outputs are loaded only on demand, an output which is failing to initialize will be tried over and

# over again. To prevent this, the following configuration options define after how many faults an output will

# not be tried again for an also configurable amount of seconds.

output_fault_count_threshold = 5

output_fault_penalty_seconds = 30

# The number of parallel running processors.

# Raise this number if your buffers are filling up.

processbuffer_processors = 5

outputbuffer_processors = 3

#outputbuffer_processor_keep_alive_time = 5000

#outputbuffer_processor_threads_core_pool_size = 3

#outputbuffer_processor_threads_max_pool_size = 30

# UDP receive buffer size for all message inputs (e. g. SyslogUDPInput).

#udp_recvbuffer_sizes = 1048576

# Wait strategy describing how buffer processors wait on a cursor sequence. (default: sleeping)

# Possible types:

# - yielding

# Compromise between performance and CPU usage.

# - sleeping

# Compromise between performance and CPU usage. Latency spikes can occur after quiet periods.

# - blocking

# High throughput, low latency, higher CPU usage.

# - busy_spinning

# Avoids syscalls which could introduce latency jitter. Best when threads can be bound to specific CPU cores.

processor_wait_strategy = blocking

# Size of internal ring buffers. Raise this if raising outputbuffer_processors does not help anymore.

# For optimum performance your LogMessage objects in the ring buffer should fit in your CPU L3 cache.

# Start server with --statistics flag to see buffer utilization.

# Must be a power of 2. (512, 1024, 2048, ...)

ring_size = 65536

inputbuffer_ring_size = 65536

inputbuffer_processors = 2

inputbuffer_wait_strategy = blocking

# Enable the disk based message journal.

message_journal_enabled = true

# The directory which will be used to store the message journal. The directory must me exclusively used by Graylog and

# must not contain any other files than the ones created by Graylog itself.

message_journal_dir = /var/lib/graylog-server/journal

# The journal holds messages before they can be written to Elasticsearch,

# for a maximum of 12 hours or 5 GB, whichever happens first.

# During normal operation the journal will be smaller.

#message_journal_max_age = 12h

#message_journal_max_size = 5gb

#message_journal_flush_age = 1m

#message_journal_flush_interval = 1000000

#message_journal_segment_age = 1h

#message_journal_segment_size = 100mb

# Number of threads used exclusively for dispatching internal events. Default is 2.

#async_eventbus_processors = 2

# EXPERIMENTAL: Dead Letters

# Every failed indexing attempt is logged by default and made visible in the web-interface. You can enable

# the experimental dead letters feature to write every message that was not successfully indexed into the

# MongoDB "dead_letters" collection to make sure that you never lose a message. The actual writing of dead

# letters should work fine already but it is not heavily tested yet and will get more features in future

# releases.

dead_letters_enabled = false

# How many seconds to wait between marking node as DEAD for possible load balancers and starting the actual

# shutdown process. Set to 0 if you have no status checking load balancers in front.

lb_recognition_period_seconds = 3

# Every message is matched against the configured streams and it can happen that a stream contains rules which

# take an unusual amount of time to run, for example if it's using regular expressions that perform excessive backtracking.

# This will impact the processing of the entire server. To keep such misbehaving stream rules from impacting other

# streams, Graylog limits the execution time for each stream.

# The default values are noted below, the timeout is in milliseconds.

# If the stream matching for one stream took longer than the timeout value, and this happened more than "max_faults" times

# that stream is disabled and a notification is shown in the web interface.

#stream_processing_timeout = 2000

#stream_processing_max_faults = 3

# Length of the interval in seconds in which the alert conditions for all streams should be checked

# and alarms are being sent.

#alert_check_interval = 60

# Since 0.21 the graylog2 server supports pluggable output modules. This means a single message can be written to multiple

# outputs. The next setting defines the timeout for a single output module, including the default output module where all

# messages end up.

#

# Time in milliseconds to wait for all message outputs to finish writing a single message.

#output_module_timeout = 10000

# Time in milliseconds after which a detected stale master node is being rechecked on startup.

#stale_master_timeout = 2000

# Time in milliseconds which Graylog is waiting for all threads to stop on shutdown.

#shutdown_timeout = 30000

# MongoDB Configuration

mongodb_useauth = false

#mongodb_user = grayloguser

#mongodb_password = 123

mongodb_host = 127.0.0.1

#mongodb_replica_set = localhost:27017,localhost:27018,localhost:27019

mongodb_database = graylog2

mongodb_port = 27017

# Raise this according to the maximum connections your MongoDB server can handle if you encounter MongoDB connection problems.

mongodb_max_connections = 100

# Number of threads allowed to be blocked by MongoDB connections multiplier. Default: 5

# If mongodb_max_connections is 100, and mongodb_threads_allowed_to_block_multiplier is 5, then 500 threads can block. More than that and an exception will be thrown.

# http://api.mongodb.org/java/current/com/mongodb/MongoOptions.html#threadsAllowedToBlockForConnectionMultiplier

mongodb_threads_allowed_to_block_multiplier = 5

# Drools Rule File (used to rewrite incoming log messages)

# See: https://www.graylog.org/documentation/general/rewriting/

#rules_file = /etc/graylog/server/rules.drl

# Email transport

#transport_email_enabled = false

#transport_email_hostname = mail.example.com

#transport_email_port = 587

#transport_email_use_auth = true

#transport_email_use_tls = true

#transport_email_use_ssl = true

#transport_email_auth_username = [email protected]

#transport_email_auth_password = secret

#transport_email_subject_prefix = [graylog2]

#transport_email_from_email = [email protected]

# Specify and uncomment this if you want to include links to the stream in your stream alert mails.

# This should define the fully qualified base url to your web interface exactly the same way as it is accessed by your users.

#transport_email_web_interface_url = https://graylog2.example.com

# HTTP proxy for outgoing HTTP calls

#http_proxy_uri =

# Disable the optimization of Elasticsearch indices after index cycling. This may take some load from Elasticsearch

# on heavily used systems with large indices, but it will decrease search performance. The default is to optimize

# cycled indices.

#disable_index_optimization = true

# Optimize the index down to <= index_optimization_max_num_segments. A higher number may take some load from Elasticsearch

# on heavily used systems with large indices, but it will decrease search performance. The default is 1.

#index_optimization_max_num_segments = 1

# Disable the index range calculation on all open/available indices and only calculate the range for the latest

# index. This may speed up index cycling on systems with large indices but it might lead to wrong search results

# in regard to the time range of the messages (i. e. messages within a certain range may not be found). The default

# is to calculate the time range on all open/available indices.

#disable_index_range_calculation = true

# The threshold of the garbage collection runs. If GC runs take longer than this threshold, a system notification

# will be generated to warn the administrator about possible problems with the system. Default is 1 second.

#gc_warning_threshold = 1s

# Connection timeout for a configured LDAP server (e. g. ActiveDirectory) in milliseconds.

#ldap_connection_timeout = 2000

# https://github.com/bazhenov/groovy-shell-server

#groovy_shell_enable = false

#groovy_shell_port = 6789

# Enable collection of Graylog-related metrics into MongoDB

#enable_metrics_collection = false

# Disable the use of SIGAR for collecting system stats

#disable_sigar = false
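Several comments in the listing above state constraints that are easy to get wrong when editing by hand: `ring_size` and `inputbuffer_ring_size` must be powers of 2, and the number of threads allowed to block on MongoDB is `mongodb_max_connections` multiplied by `mongodb_threads_allowed_to_block_multiplier`. The following is a minimal sketch of a sanity check for those two rules. The helper names (`parse_properties`, `check_server_conf`, `max_blocked_threads`) and the inline `SAMPLE` text are illustrative assumptions, not part of Graylog; point it at your own copy of the file as needed.

```python
def parse_properties(text):
    """Parse 'key = value' lines, skipping blank lines and '#' comments."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            settings[key.strip()] = value.strip()
    return settings


def is_power_of_two(n):
    """True for 1, 2, 4, 8, ... (exactly one bit set)."""
    return n > 0 and n & (n - 1) == 0


def check_server_conf(settings):
    """Return a list of human-readable problems; empty if none were found."""
    problems = []
    for key in ("ring_size", "inputbuffer_ring_size"):
        if key in settings and not is_power_of_two(int(settings[key])):
            problems.append(f"{key} = {settings[key]} is not a power of 2")
    return problems


def max_blocked_threads(settings):
    """Connections times multiplier, as described in the config comment."""
    return (int(settings["mongodb_max_connections"])
            * int(settings["mongodb_threads_allowed_to_block_multiplier"]))


# Hypothetical excerpt using the values from the listing above.
SAMPLE = """
ring_size = 65536
inputbuffer_ring_size = 65536
#message_journal_max_size = 5gb
mongodb_max_connections = 100
mongodb_threads_allowed_to_block_multiplier = 5
"""

conf = parse_properties(SAMPLE)
print(check_server_conf(conf))
print(max_blocked_threads(conf))
```

With the values shown in the listing, the check reports no problems and the blocked-thread limit works out to 500, matching the figure given in the config comment.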

[root@logserver init.d]$ cat /etc/graylog/web/web.conf

# graylog2-server REST URIs (one or more, comma separated) For example: "http://127.0.0.1:12900/,http://127.0.0.1:12910/"

graylog2-server.uris="http://127.0.0.1:12900/"

# Learn how to configure custom logging in the documentation:

# https://www.graylog.org/documentation/setup/webinterface/

# Secret key

# ~~~~~

# The secret key is used to secure cryptographic functions. Set this to a long and randomly generated string.

# If you deploy your application to several instances be sure to use the same key!

# Generate for example with: pwgen -N 1 -s 96

application.secret="Vio48oiufs4TD6XBN0PXZT2FvPmfs1L3BvbByvo7Pwwz7mUyR0HUlMspNxdQ8dKdHpSwmh67cbkISlPs9cmzqTkJXVHFrI9P"

# Web interface timezone

# Graylog stores all timestamps in UTC. To properly display times, set the default timezone of the interface.

# If you leave this out, Graylog will pick your system default as the timezone. Usually you will want to configure it explicitly.

# timezone="Europe/Berlin"

timezone="Asia/Shanghai"

# Message field limit

# Your web interface can cause high load in your browser when you have a lot of different message fields. The default

# limit of message fields is 100. Set it to 0 if you always want to get all fields. They are for example used in the

# search result sidebar or for autocompletion of field names.

field_list_limit=100

# Use this to run Graylog with a path prefix

#application.context=/graylog2

# You usually do not want to change this.

application.global=lib.Global
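The `application.secret` comment above suggests `pwgen -N 1 -s 96` for generating the key. If `pwgen` is not installed, a similar 96-character alphanumeric string can be produced with Python's standard `secrets` module. This is only a sketch: the function name `generate_secret` is an assumption for illustration, and each installation should generate its own value rather than reuse the one shown in the listing.

```python
import secrets
import string

# Letters and digits only, so the value needs no escaping inside the quotes.
ALPHABET = string.ascii_letters + string.digits


def generate_secret(length=96):
    """Return a random alphanumeric string from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


# Print a line that can be pasted into web.conf.
print(f'application.secret="{generate_secret()}"')
```

As the config comment notes, if the web interface is deployed to several instances, the same generated key should be used on all of them.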